Many of our users implement operational reporting and analytics on DynamoDB using Rockset as a SQL intelligence layer to serve live dashboards and applications. As an engineering team, we are constantly searching for opportunities to improve their SQL-on-DynamoDB experience.

For the past few weeks, we have been hard at work tuning the performance of our DynamoDB ingestion process. The first step in this process was diving into DynamoDB’s documentation and doing some experimentation to ensure that we were using DynamoDB’s read APIs in a way that maximizes both the stability and performance of our system.

Background on DynamoDB APIs

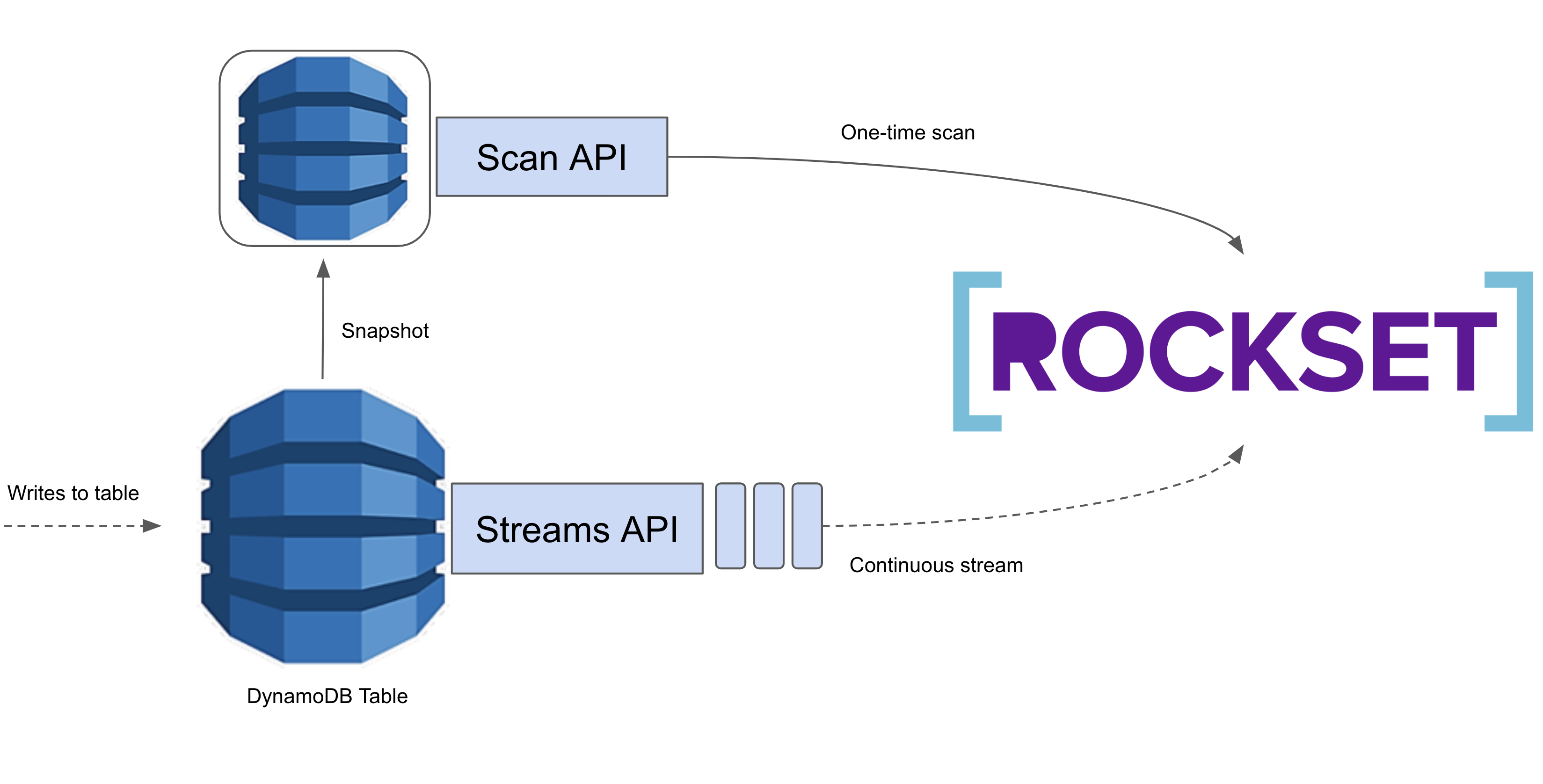

AWS offers a Scan API and a Streams API for reading data from DynamoDB. The Scan API allows us to linearly scan an entire DynamoDB table. This is expensive, but sometimes unavoidable. We use the Scan API the first time we load data from a DynamoDB table to a Rockset collection, as we have no means of gathering all the data other than scanning through it. After this initial load, we only need to monitor for updates, so using the Scan API would be quite wasteful. Instead, we use the Streams API, which gives us a time-ordered queue of updates applied to the DynamoDB table. We read these updates and apply them into Rockset, giving users realtime access to their DynamoDB data in Rockset!

The challenge we’ve been undertaking is to make ingesting data from DynamoDB into Rockset as seamless and cost-efficient as possible given the constraints presented by data sources like DynamoDB. Below, I’ll discuss a few of the issues we ran into in tuning and stabilizing both phases of our DynamoDB ingestion process while keeping costs low for our users.

Scans

How we measure scan performance

During the scanning phase, we aim to consistently maximize our read throughput from DynamoDB without consuming more than a user-specified number of RCUs per table. We want ingesting data into Rockset to be efficient without interfering with existing workloads running on users’ live DynamoDB tables.

Understanding how to set scan parameters

From very preliminary testing, we noticed that our scanning phase took quite a long time to complete, so we did some digging to figure out why. We ingested a DynamoDB table into Rockset and observed what happened during the scanning phase. We expected to consistently consume all of the provisioned throughput.

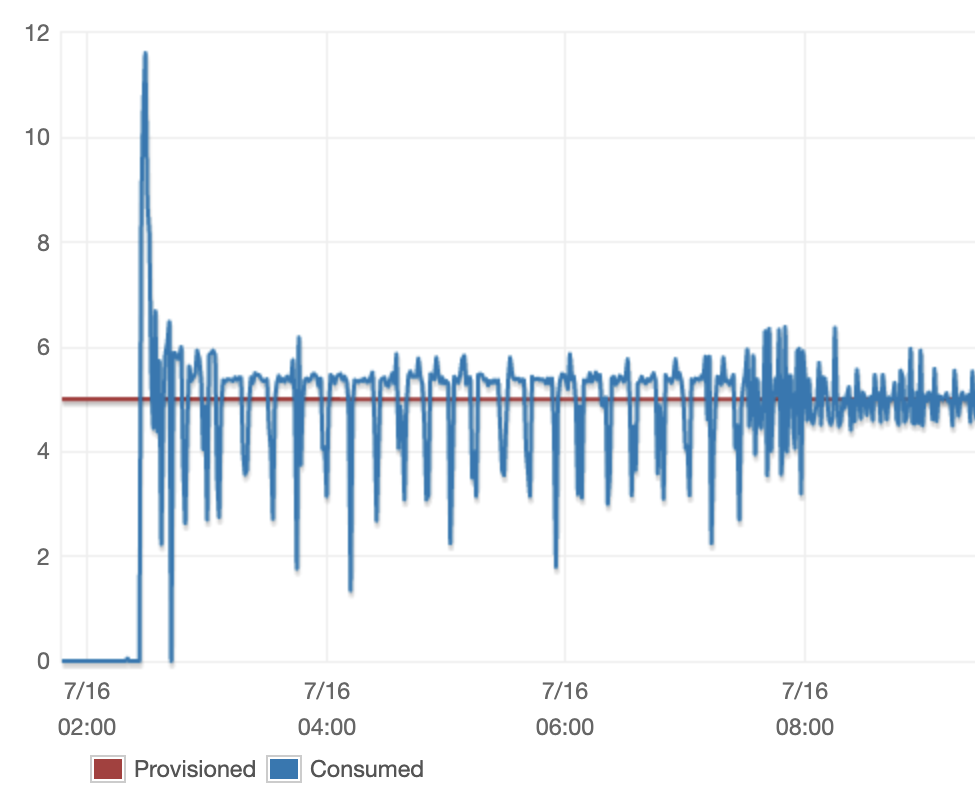

Initially, our RCU consumption appeared like the next:

We saw an inexplicable level of fluctuation in the RCU consumption over time, particularly in the first half of the scan. These fluctuations are bad because each time there’s a major drop in the throughput, we end up lengthening the ingestion process and increasing our users’ DynamoDB costs.

The problem was clear but the underlying cause was not obvious. At the time, there were a few variables that we were controlling quite naively. DynamoDB exposes two important variables: page size and segment count, both of which we had set to fixed values. We also had our own rate limiter which throttled the number of DynamoDB Scan API calls we made, and we had set the limit it enforced to a fixed value as well. We suspected that one of these variables being sub-optimally configured was the likely cause of the massive fluctuations we were observing.

Some investigation revealed that the cause of the fluctuation was primarily the rate limiter. It turned out the fixed limit we had set on our rate limiter was too low, so we were getting throttled too aggressively by our own rate limiter. We decided to fix this problem by configuring our limiter based on the amount of RCU allocated to the table. We can easily (and do plan to) transition to using a user-specified number of RCU for each table, which will allow us to limit Rockset’s RCU consumption even when users have RCU autoscaling enabled.

    public int getScanRateLimit(AmazonDynamoDB client, String tableName,
                                int numSegments) {
      TableDescription tableDesc = client.describeTable(tableName).getTable();
      // Note: this will return 0 if the table has RCU autoscaling enabled
      final long tableRcu = tableDesc.getProvisionedThroughput().getReadCapacityUnits();
      // Split the table's RCU budget evenly across the scan segments
      return (int) (tableRcu / numSegments);
    }
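The split above divides by the segment count directly, so a table that reports 0 RCUs would yield a limit of 0. Below is a minimal sketch of one way to guard against that and to honor the user-specified cap mentioned earlier. The class name `ScanBudget`, the `userCapRcu` parameter, the "0 means no cap" convention, and the floor of 1 RCU per segment are all assumptions made for illustration, not Rockset's actual implementation.

```java
/** Hypothetical helper: splits a table's read budget across scan segments. */
final class ScanBudget {
    private ScanBudget() {}

    /**
     * @param tableRcu    provisioned RCUs reported for the table (may be 0)
     * @param userCapRcu  user-specified RCU cap, or 0 if none was given (assumed convention)
     * @param numSegments number of parallel scan segments, must be positive
     * @return RCUs per second each segment may consume, never less than 1
     */
    static int perSegmentLimit(long tableRcu, long userCapRcu, int numSegments) {
        if (numSegments <= 0) {
            throw new IllegalArgumentException("numSegments must be positive");
        }
        // Use the user's cap when present; otherwise fall back to the table's RCUs.
        long budget = userCapRcu > 0 ? userCapRcu : tableRcu;
        // When both values are known, never exceed the smaller of the two.
        if (userCapRcu > 0 && tableRcu > 0) {
            budget = Math.min(userCapRcu, tableRcu);
        }
        // Integer division rounds down; clamp so a limiter is never created with rate 0.
        long perSegment = budget / numSegments;
        return (int) Math.max(1L, Math.min(perSegment, (long) Integer.MAX_VALUE));
    }
}
```

For example, a table with 1000 RCUs, a user cap of 400, and 4 segments gives each segment a budget of 100 RCUs per second in this sketch.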

For each segment, we perform a scan, consuming capacity on our rate limiter as we consume DynamoDB RCUs.

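    public void doScan(AmazonDynamoDB client, String tableName, int numSegments) {
      RateLimiter rateLimiter = RateLimiter.create(getScanRateLimit(client,
          tableName, numSegments));
      boolean done = false;
      while (!done) {
        // The request must ask for consumed capacity to be returned
        ScanResult result = client.scan(/* feed scan request in */);
        // do processing ...
        // Charge the limiter for the RCUs this page consumed (at least one permit)
        int consumed = (int) Math.ceil(result.getConsumedCapacity().getCapacityUnits());
        rateLimiter.acquire(Math.max(1, consumed));
        // The scan is complete once DynamoDB returns no LastEvaluatedKey
        done = result.getLastEvaluatedKey() == null;
      }
    }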
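The loop above charges the limiter after each page returns, because the RCUs a page consumes are only known from the response. The sketch below illustrates that "pay afterwards" pattern with a deliberately simplified limiter driven by an explicit clock, so its behavior is easy to trace by hand. `PostPaidLimiter` and its `charge` method are hypothetical stand-ins; this is not Guava's `RateLimiter` and not the production code.

```java
/** Hypothetical, simplified limiter: each charge delays the NEXT caller, not the current one. */
final class PostPaidLimiter {
    private final double permitsPerSecond;
    private double nextFreeSeconds = 0.0; // earliest time the next charge may proceed

    PostPaidLimiter(double permitsPerSecond) {
        if (permitsPerSecond <= 0) {
            throw new IllegalArgumentException("rate must be positive");
        }
        this.permitsPerSecond = permitsPerSecond;
    }

    /**
     * Records that {@code permits} capacity units were just consumed at {@code nowSeconds}.
     *
     * @return how long the caller should wait, in seconds, to pay off earlier charges
     */
    double charge(double permits, double nowSeconds) {
        // Wait out any debt left by earlier charges.
        double wait = Math.max(0.0, nextFreeSeconds - nowSeconds);
        // The cost of this charge is pushed onto whoever calls next.
        double start = Math.max(nextFreeSeconds, nowSeconds);
        nextFreeSeconds = start + permits / permitsPerSecond;
        return wait;
    }
}
```

With a rate of 100 units per second, two back-to-back charges of 50 units at time 0 return waits of 0 and 0.5 seconds: the first page is free to proceed, and the second pays for the first.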

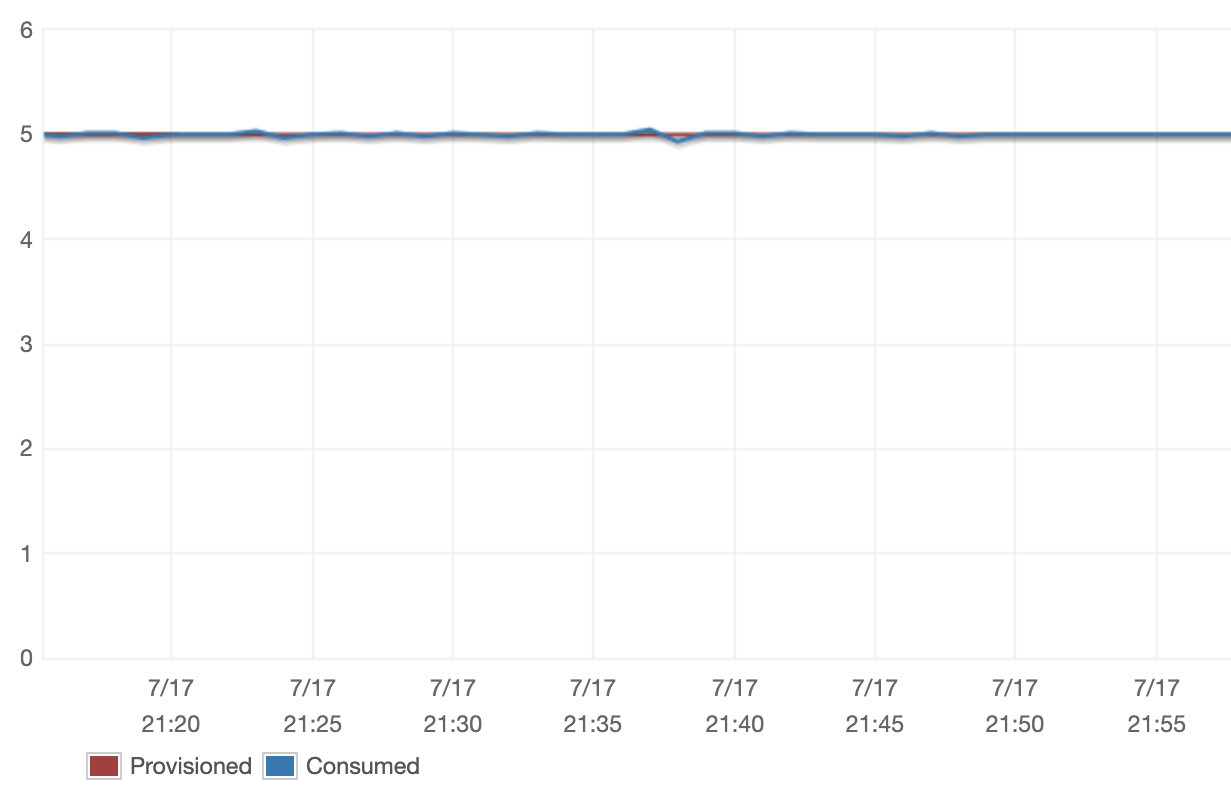

The results of our new Scan configuration was the next:

We were happy to see that, with our new configuration, we were able to reliably control the amount of throughput we consumed. The problem we discovered with our rate limiter brought to light our underlying need for more dynamic DynamoDB Scan configurations. We’re continuing to run experiments to determine how to dynamically set the page size and segment count based on table-specific data, but we also moved on to dealing with some of the challenges we were facing with DynamoDB Streams.

Streams

How we measure streaming performance

Our goal during the streaming phase of ingestion is to minimize the amount of time it takes for an update to enter Rockset after it is applied in DynamoDB while keeping the cost of using Rockset as low as possible for our users. The primary cost factor for DynamoDB Streams is the number of API calls we make. DynamoDB’s pricing allows users 2.5 million free API calls and charges $0.02 per 100,000 requests beyond that. We want to try to stay as close to the free tier as possible.

Previously we were querying DynamoDB at a rate of ~300 requests/second because we encountered a lot of empty shards in the streams we were reading. We believed that we would need to iterate through all of these empty shards regardless of the rate we were querying at. To mitigate the load we put on users’ DynamoDB tables (and in turn their wallets), we set a timer on these reads and then stopped reading for 5 minutes if we didn’t find any new records. Given that this mechanism ended up charging users who didn’t even have much data in DynamoDB and still had a worst-case latency of 5 minutes, we started investigating how we could do better.

Reducing the frequency of streaming calls

We ran several experiments to clarify our understanding of the DynamoDB Streams API and determine whether we could reduce the frequency of the DynamoDB Streams API calls our users were being charged for. For each experiment, we varied the amount of time we waited between API calls and measured the average amount of time it took for an update to a DynamoDB table to be reflected in Rockset.

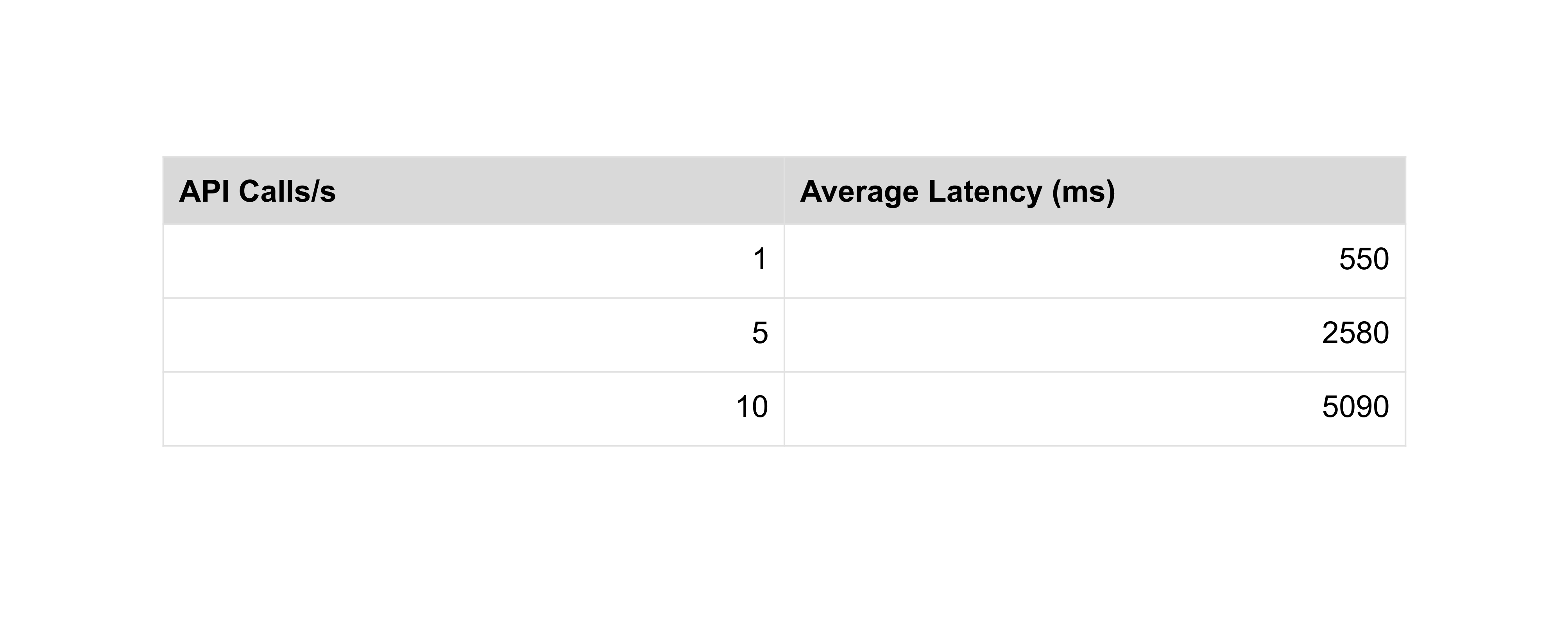

Inserting information into the DynamoDB desk at a continuing price of two information/second, the outcomes have been as follows:

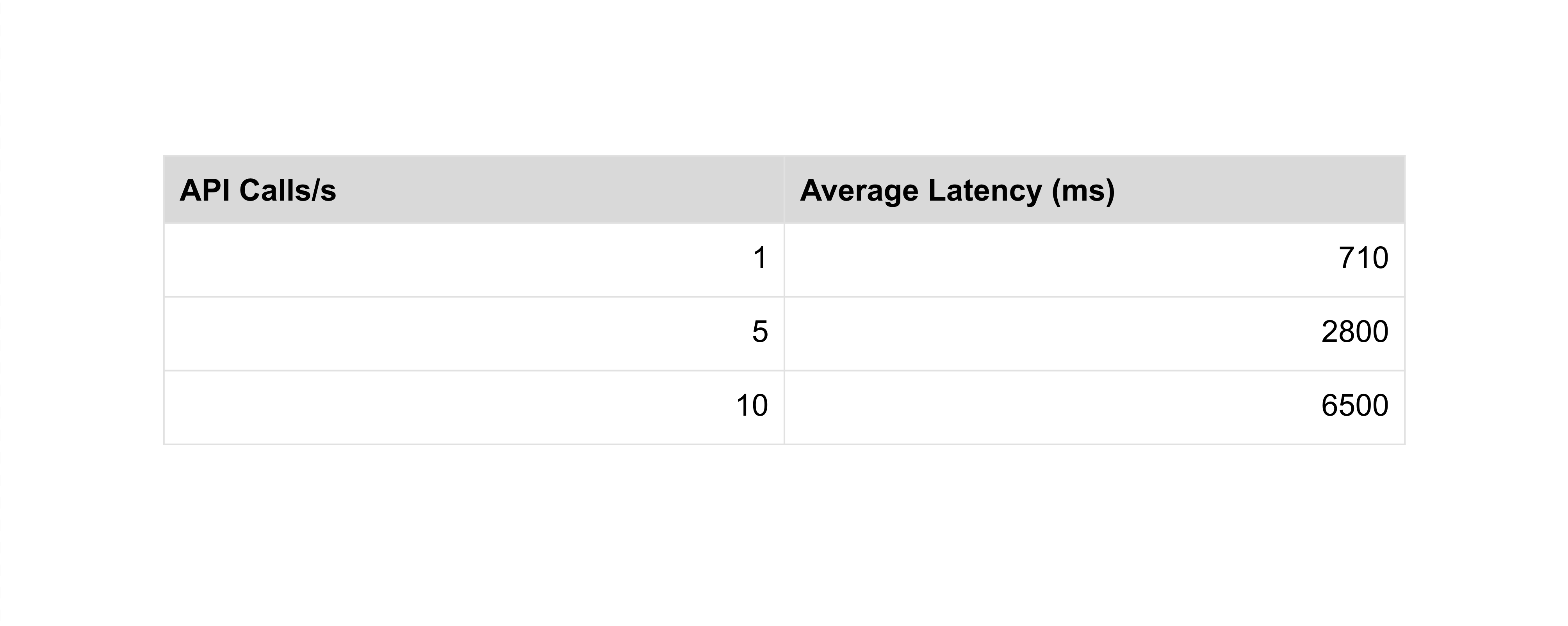

Inserting information into the DynamoDB desk in a bursty sample, the outcomes have been as follows:

The results above showed that making 1 API call every second is plenty to ensure that we maintain sub-second latencies. Our initial assumptions were wrong, but these results illuminated a clear path forward. We promptly modified our ingestion process to query DynamoDB Streams for new data only once per second, which gives us the performance we’re looking for at a much reduced cost to our users.
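To see why a once-per-second poll can still keep the added delay below one second, here is a toy model that ignores network and processing time entirely. It assumes records arrive at known, non-negative times and are picked up by the first poll at or after their arrival. `PollingModel` and its method are illustrative names, and the numbers it produces are not the measurements reported above.

```java
/** Toy model: average wait between a record's arrival and the next poll. */
final class PollingModel {
    private PollingModel() {}

    /**
     * @param arrivalMillis  non-negative times at which records are written, in milliseconds
     * @param intervalMillis time between polls; polls happen at 0, interval, 2*interval, ...
     * @return mean delay in milliseconds until each record is picked up
     */
    static double averageDelayMillis(long[] arrivalMillis, long intervalMillis) {
        if (intervalMillis <= 0 || arrivalMillis.length == 0) {
            throw new IllegalArgumentException("need a positive interval and at least one record");
        }
        long total = 0;
        for (long arrival : arrivalMillis) {
            // Round the arrival time up to the next poll boundary.
            long polls = (arrival + intervalMillis - 1) / intervalMillis;
            long pickedUpAt = polls * intervalMillis;
            total += pickedUpAt - arrival;
        }
        return (double) total / arrivalMillis.length;
    }
}
```

With two records per second arriving at 250 ms and 750 ms offsets, a 1000 ms interval gives a mean delay of 500 ms in this model, and no single record waits longer than the polling interval itself.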

Calculating our cost reduction

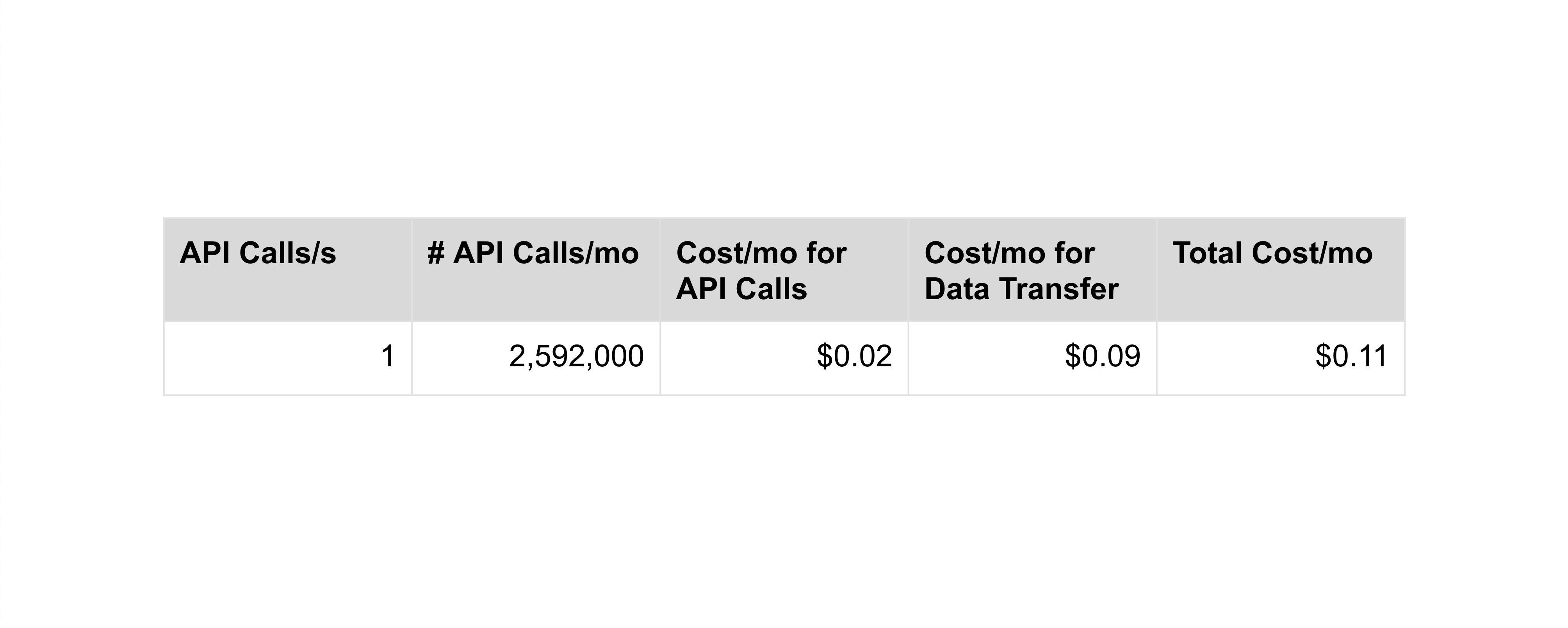

Since with DynamoDB Streams we’re immediately chargeable for our customers prices, we determined that we would have liked to exactly calculate the price our customers incur as a result of method we use DynamoDB Streams. There are two components which wholly decide the quantity that customers will probably be charged for DynamoDB Streams: the variety of Streams API calls made and the quantity of knowledge transferred. The quantity of knowledge transferred is essentially past our management. Every API name response unavoidably transfers a small quantity (768 bytes) of knowledge. The remaining is all consumer information, which is simply learn into Rockset as soon as. We targeted on controlling the variety of DynamoDB Streams API calls we make to customers’ tables as this was beforehand the driving force of our customers’ DynamoDB prices.

Following is a breakdown of the price we estimate with our newly reworked ingestion course of:

We were happy to see that, with our optimizations, our users should incur virtually no additional cost on their DynamoDB tables due to Rockset!
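As a rough worked example of the arithmetic, the sketch below estimates the monthly Streams request charge from the polling rate, using only the prices quoted earlier (2.5 million free calls, then $0.02 per 100,000 requests) and the 768-byte per-response overhead. It assumes a 30-day month, treats the free allowance as monthly, and uses a single constant polling rate. `StreamsCost` and its methods are hypothetical names, and real bills depend on shard counts and current AWS pricing.

```java
/** Hypothetical estimator for DynamoDB Streams request charges. */
final class StreamsCost {
    static final long FREE_CALLS_PER_MONTH = 2_500_000L;
    static final double DOLLARS_PER_100K_CALLS = 0.02;
    static final long SECONDS_PER_MONTH = 30L * 24 * 60 * 60; // assumes a 30-day month
    static final long OVERHEAD_BYTES_PER_CALL = 768L;          // per-response overhead cited in the text

    private StreamsCost() {}

    /** Total API calls made in a month at a constant rate. */
    static long monthlyCalls(long callsPerSecond) {
        return callsPerSecond * SECONDS_PER_MONTH;
    }

    /** Request charge in dollars after subtracting the free allowance. */
    static double monthlyRequestCost(long callsPerSecond) {
        long billable = Math.max(0L, monthlyCalls(callsPerSecond) - FREE_CALLS_PER_MONTH);
        return billable / 100_000.0 * DOLLARS_PER_100K_CALLS;
    }

    /** Bytes transferred per month purely as per-call response overhead. */
    static long monthlyOverheadBytes(long callsPerSecond) {
        return monthlyCalls(callsPerSecond) * OVERHEAD_BYTES_PER_CALL;
    }
}
```

Under these assumptions, 300 calls per second comes to roughly $155 per month in request charges, while 1 call per second comes to about $0.02.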

Conclusion

We’re really excited that the work we’ve been doing has successfully driven DynamoDB costs down for our users while allowing them to interact with their DynamoDB data in Rockset in realtime!

This is just a sneak peek into some of the challenges and tradeoffs we’ve faced while working to make ingesting data from DynamoDB into Rockset as seamless as possible. If you’re interested in learning more about how to operationalize your DynamoDB data using Rockset, check out some of our recent material and stay tuned for updates as we continue to build Rockset out!

If you’d like to see Rockset and DynamoDB in action, you should check out our brief product tour.

Other DynamoDB resources: