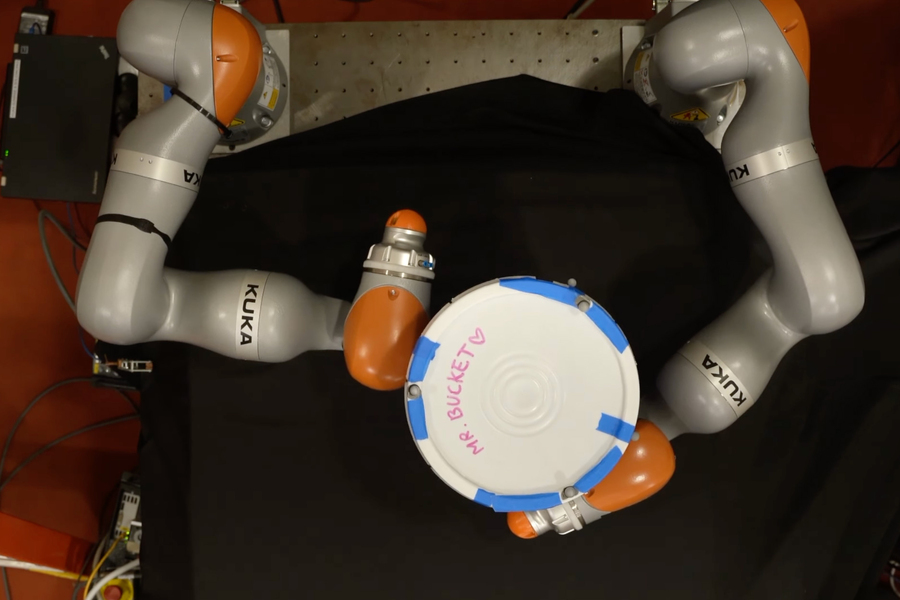

MIT researchers developed an AI technique that enables a robot to develop complex plans for manipulating an object using its entire hand, not just the fingertips. This model can generate effective plans in about a minute using a standard laptop. Here, a robot attempts to rotate a bucket 180 degrees. Image: Courtesy of the researchers

By Adam Zewe | MIT News

Imagine you want to carry a large, heavy box up a flight of stairs. You might spread your fingers out and lift that box with both hands, then hold it on top of your forearms and balance it against your chest, using your whole body to manipulate the box.

Humans are generally good at whole-body manipulation, but robots struggle with such tasks. To the robot, each spot where the box could touch any point on the carrier’s fingers, arms, and torso represents a contact event that it must reason about. With billions of potential contact events, planning for this task quickly becomes intractable.
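To get a rough sense of why the count explodes, suppose (a simplifying assumption for illustration, not a figure from the researchers) that each candidate pair of surfaces, say one patch of the hand and one face of the box, is either touching or not. The number of possible contact combinations then doubles with every pair added. The function name `contact_modes` is hypothetical.

```python
def contact_modes(num_contact_pairs: int) -> int:
    """Number of on/off contact combinations, assuming each candidate pair is independently touching or not."""
    # Two states per pair (contact or no contact), so the possibilities multiply: 2 * 2 * ... * 2.
    return 2 ** num_contact_pairs


for n in (10, 20, 31):
    print(f"{n} candidate contact pairs -> {contact_modes(n):,} contact combinations")
```

Thirty-one pairs already give 2,147,483,648 combinations. Real contact is richer still, since touching surfaces can also stick or slide, so this two-state count undercounts.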

Now MIT researchers have found a way to simplify this process, known as contact-rich manipulation planning. They use an AI technique called smoothing, which summarizes many contact events into a smaller number of decisions, to enable even a simple algorithm to quickly identify an effective manipulation plan for the robot.

While still in its early days, this method could potentially enable factories to use smaller, mobile robots that can manipulate objects with their entire arms or bodies, rather than large robotic arms that can only grasp using fingertips. This may help reduce energy consumption and drive down costs. In addition, this technique could be useful in robots sent on exploration missions to Mars or other solar system bodies, since they could adapt to the environment quickly using only an onboard computer.

“Rather than thinking about this as a black-box system, if we can leverage the structure of these kinds of robotic systems using models, there is an opportunity to accelerate the whole procedure of trying to make these decisions and come up with contact-rich plans,” says H.J. Terry Suh, an electrical engineering and computer science (EECS) graduate student and co-lead author of a paper on this technique.

Joining Suh on the paper are co-lead author Tao Pang PhD ’23, a roboticist at Boston Dynamics AI Institute; Lujie Yang, an EECS graduate student; and senior author Russ Tedrake, the Toyota Professor of EECS, Aeronautics and Astronautics, and Mechanical Engineering, and a member of the Computer Science and Artificial Intelligence Laboratory (CSAIL). The research appears this week in IEEE Transactions on Robotics.

Learning about learning

Reinforcement learning is a machine-learning technique in which an agent, such as a robot, learns to complete a task through trial and error, receiving a reward for getting closer to a goal. Researchers say this type of learning takes a black-box approach because the system must learn everything about the world through trial and error.

It has been used effectively for contact-rich manipulation planning, where the robot seeks to learn the best way to move an object in a specified manner.

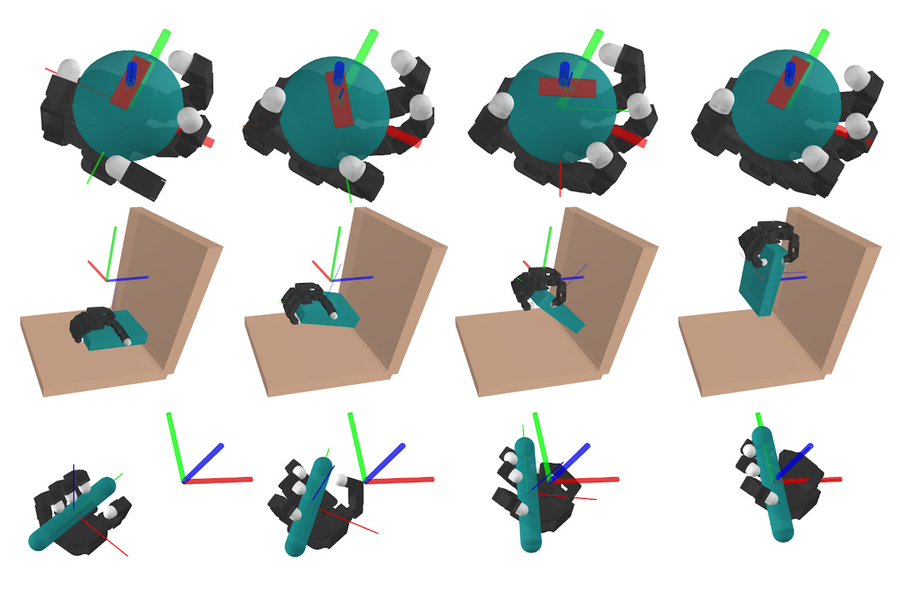

In these figures, a simulated robot performs three contact-rich manipulation tasks: in-hand manipulation of a ball, picking up a plate, and manipulating a pen into a specific orientation. Image: Courtesy of the researchers

But because there may be billions of potential contact points that a robot must reason about when determining how to use its fingers, hands, arms, and body to interact with an object, this trial-and-error approach requires a great deal of computation.

“Reinforcement learning may need to go through millions of years in simulation time to actually be able to learn a policy,” Suh adds.

On the other hand, if researchers specifically design a physics-based model using their knowledge of the system and the task they want the robot to accomplish, that model incorporates structure about this world that makes it more efficient.

Yet physics-based approaches aren’t as effective as reinforcement learning when it comes to contact-rich manipulation planning, and Suh and Pang wondered why.

They conducted a detailed analysis and found that a technique known as smoothing is what enables reinforcement learning to perform so well.

Many of the decisions a robot could make when determining how to manipulate an object aren’t important in the grand scheme of things. For instance, each infinitesimal adjustment of one finger, whether or not it results in contact with the object, doesn’t matter very much. Smoothing averages away many of those unimportant, intermediate decisions, leaving a few important ones.
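One way to see what smoothing does is a deliberately simplified one-dimensional setting of our own choosing, not the researchers’ formulation. Let $x$ be how far a finger has moved toward an object’s surface, with contact beginning at $x = 0$, and let $f$ record only whether contact happens. Averaging $f$ over small random perturbations $w$ of the finger position, drawn here (as an illustrative assumption) from a Gaussian with spread $\sigma$, turns the abrupt on/off switch into a gradual ramp:

$$
f(x) = \begin{cases} 1, & x \ge 0 \ \text{(contact)} \\ 0, & x < 0 \ \text{(no contact)} \end{cases}
\qquad
\bar f_\sigma(x) = \mathbb{E}_{w \sim \mathcal{N}(0,\sigma^2)}\big[f(x+w)\big] = \Pr(w \ge -x) = \Phi\!\left(\frac{x}{\sigma}\right)
$$

$$
\frac{d\bar f_\sigma}{dx}(x) = \frac{1}{\sigma}\,\varphi\!\left(\frac{x}{\sigma}\right) > 0 \quad \text{for all } x,
\qquad \text{whereas} \qquad
\frac{df}{dx}(x) = 0 \quad \text{for all } x \neq 0.
$$

Here $\Phi$ and $\varphi$ are the standard normal cumulative distribution and density functions. The unsmoothed $f$ gives a planner no hint about which tiny finger adjustments matter, while $\bar f_\sigma$ changes gradually everywhere and approaches $f$ as $\sigma \to 0$.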

Reinforcement learning performs smoothing implicitly by trying many contact points and then computing a weighted average of the results. Drawing on this insight, the MIT researchers designed a simple model that performs a similar type of smoothing, enabling it to focus on core robot-object interactions and predict long-term behavior. They showed that this approach could be just as effective as reinforcement learning at generating complex plans.
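The weighted-average idea can be sketched in a few lines of Python. This is a toy of our own, not the researchers’ model: a single finger approaches a wall at position 0, the contact force follows a hypothetical stiff-spring law that is exactly zero before contact, and the smoothed force is estimated by averaging the force over many randomly perturbed finger positions (sampling the perturbations from a Gaussian amounts to a Gaussian-weighted average). The stiffness, noise level, sample count, and function names are illustrative assumptions.

```python
import numpy as np

STIFFNESS = 100.0  # hypothetical spring stiffness of the contact, in N/m


def contact_force(x):
    """Hard contact model: zero force until the finger reaches the wall at x = 0, then a stiff spring."""
    return STIFFNESS * np.maximum(0.0, x)


def smoothed_force(x, sigma=0.01, num_samples=100_000, seed=0):
    """Randomized smoothing: average the hard force over Gaussian perturbations of the finger position."""
    rng = np.random.default_rng(seed)
    perturbations = rng.normal(0.0, sigma, size=num_samples)
    return float(np.mean(contact_force(x + perturbations)))


def finite_difference(f, x, h=1e-3):
    """Central-difference estimate of the derivative df/dx."""
    return (f(x + h) - f(x - h)) / (2 * h)


x = -0.01  # finger 1 cm short of the wall, so the hard model sees no contact
print("hard-model gradient:    ", finite_difference(contact_force, x))
print("smoothed-model gradient:", finite_difference(smoothed_force, x))
```

With the finger 1 cm short of the wall, the hard model reports a gradient of exactly zero, so a gradient-based planner would get no signal to move toward contact. The smoothed estimate should land close to the analytic value STIFFNESS * Phi(-1), about 15.9, which points the finger toward the wall. Reusing the same random seed for both finite-difference evaluations keeps sampling noise from swamping the difference.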

“If you know a bit more about your problem, you can design more efficient algorithms,” Pang says.

A winning combination

Even though smoothing greatly simplifies the decisions, searching through the remaining decisions can still be a difficult problem. So, the researchers combined their model with an algorithm that can rapidly and efficiently search through all possible decisions the robot could make.
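The article does not say which search algorithm the team used. As a generic illustration of the kind of search involved, here is a minimal rapidly-exploring random tree (RRT), a standard sampling-based planner that grows a tree of reachable states by repeatedly sampling a target and extending the nearest node toward it. This toy plans a path for a point in a unit square around a hypothetical wall; it does not model contact, smoothing, or a robot hand, and the step size, goal bias, obstacle, and function names are illustrative assumptions.

```python
import math
import random


def is_free(p):
    """Hypothetical obstacle: a wall at 0.45 <= x <= 0.55 that leaves a gap above y = 0.7."""
    return not (0.45 <= p[0] <= 0.55 and p[1] < 0.7)


def edge_free(a, b, checks=10):
    """Approximate edge check: test evenly spaced points from a to b."""
    return all(
        is_free((a[0] + k / checks * (b[0] - a[0]), a[1] + k / checks * (b[1] - a[1])))
        for k in range(checks + 1)
    )


def rrt(start, goal, step=0.05, goal_tol=0.05, goal_bias=0.1, max_iters=10_000, seed=0):
    """Grow a rapidly-exploring random tree in the unit square; return a start-to-goal path or None."""
    rng = random.Random(seed)
    nodes = [start]
    parent = [None]  # parent[i] is the index of node i's parent in the tree
    for _ in range(max_iters):
        # Occasionally aim straight at the goal; otherwise sample the square uniformly.
        target = goal if rng.random() < goal_bias else (rng.random(), rng.random())
        # Nearest existing node (a linear scan keeps the sketch simple).
        i_near = min(range(len(nodes)), key=lambda i: math.dist(nodes[i], target))
        near = nodes[i_near]
        d = math.dist(near, target)
        if d == 0.0:
            continue
        # Steer: move at most `step` from the nearest node toward the target.
        t = min(1.0, step / d)
        new = (near[0] + t * (target[0] - near[0]), near[1] + t * (target[1] - near[1]))
        if not edge_free(near, new):
            continue
        nodes.append(new)
        parent.append(i_near)
        if math.dist(new, goal) <= goal_tol:
            # Follow parent links back to the root, then reverse into start-to-goal order.
            path, i = [], len(nodes) - 1
            while i is not None:
                path.append(nodes[i])
                i = parent[i]
            return path[::-1]
    return None


path = rrt(start=(0.1, 0.1), goal=(0.9, 0.1))
print(f"found a path with {len(path)} waypoints" if path else "no path found")
```

The edge check here only samples points along each segment, which is an approximation; a production planner would use exact collision checking. In a manipulation setting, the "steer" step would instead call a model of how the robot and object move in response to a chosen action.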

With this combination, the computation time was cut down to about a minute on a standard laptop.

They first tested their approach in simulations where robotic hands were given tasks like moving a pen to a desired configuration, opening a door, or picking up a plate. In each instance, their model-based approach achieved the same performance as reinforcement learning, but in a fraction of the time. They saw similar results when they tested their model in hardware on real robotic arms.

“The same ideas that enable whole-body manipulation also work for planning with dexterous, human-like hands. Previously, most researchers said that reinforcement learning was the only approach that scaled to dexterous hands, but Terry and Tao showed that by taking this key idea of (randomized) smoothing from reinforcement learning, they can make more traditional planning methods work extremely well, too,” Tedrake says.

However, the model they developed relies on a simpler approximation of the real world, so it cannot handle very dynamic motions, such as objects falling. While effective for slower manipulation tasks, their approach cannot create a plan that would enable a robot to toss a can into a trash bin, for instance. In the future, the researchers plan to enhance their technique so it can tackle these highly dynamic motions.

“If you study your models carefully and really understand the problem you are trying to solve, there are definitely some gains you can achieve. There are benefits to doing things that are beyond the black box,” Suh says.

This work is funded, in part, by Amazon, MIT Lincoln Laboratory, the National Science Foundation, and the Ocado Group.
