Scalability, performance, and efficiency are the key considerations behind Rockset’s design and architecture. Today, we’re thrilled to share a remarkable milestone in one of these dimensions. A customer workload achieved 20K queries per second (QPS) with a p95 query latency of under 200ms while continuously ingesting streaming data, a significant demonstration of the scalability of our systems. This technical blog highlights the architecture that paved the way for this accomplishment.

Understanding real-time workloads

High QPS is often important for organizations that require real-time or near-real-time processing of a large volume of queries. These range from online marketplaces that need to handle a large number of customer queries and product searches to retail platforms that need high QPS to serve personalized recommendations in real time. In most of these real-time use cases, new data never stops arriving and queries never stop either. A database that serves real-time analytical queries has to process reads and writes concurrently.

- Scalability: To serve the high volume of incoming queries, it is important to be able to distribute the workload across multiple nodes and scale horizontally as needed.

- Workload Isolation: When real-time data ingestion and query workloads run on the same compute units, they directly compete for resources. When data ingestion has a flash flood moment, your queries slow down or time out, making your application flaky. When you have a sudden, unexpected burst of queries, your data lags, making your application not so real time anymore.

- Query Optimization: When data sizes are large, you cannot afford to scan large portions of your data to respond to queries, especially when the QPS is high as well. Queries need to lean heavily on underlying indexes to reduce the amount of compute needed per query.

- Concurrency: High query rates can lead to contention for locks, causing performance bottlenecks or deadlocks. Effective concurrency control mechanisms are necessary to maintain data consistency and prevent performance degradation.

- Data Sharding and Distribution: Efficiently sharding and distributing data across multiple nodes is essential for parallel processing and load balancing.

Let’s discuss each of the above points in more detail and analyze how the Rockset architecture helps.

How Rockset architecture enables QPS scaling

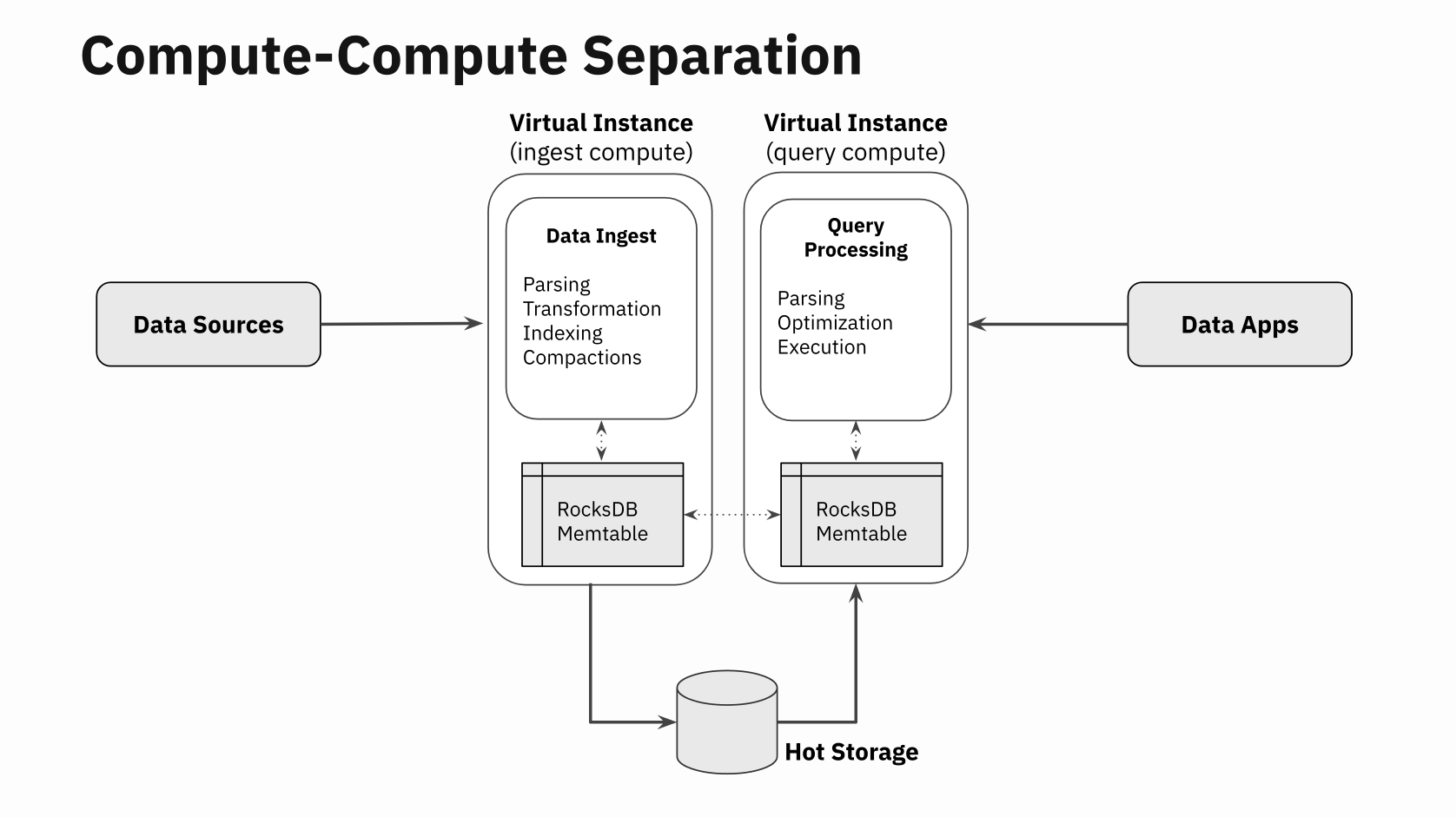

Scale: Rockset separates compute from storage. A Rockset Virtual Instance (VI) is a cluster of compute and cache resources. It is completely separate from the hot storage tier, an SSD-based distributed storage system that stores the user’s dataset. The hot storage tier serves requests for data blocks from the software running on the Virtual Instance. The critical requirement is that multiple Virtual Instances can update and read the same dataset residing on hot storage. A data update made from one Virtual Instance is visible on the other Virtual Instances within a few milliseconds.

Now you can imagine how easy it is to scale the system up or down. When the query volume is low, just use one Virtual Instance to serve queries. When the query volume increases, spin up a new Virtual Instance and distribute the query load across all the Virtual Instances. These Virtual Instances don’t need a new copy of the data; instead, they all fetch data from the hot storage tier. Because no data replicas need to be made, scale-up is fast.
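
To make the scale-out step concrete, here is a minimal sketch of spreading queries over a growing set of Virtual Instances. The `QueryRouter` class, the round-robin policy, and the instance names are assumptions made for illustration; they are not Rockset’s API or its actual load-balancing logic.

```python
from collections import Counter
from itertools import cycle


class QueryRouter:
    """Hypothetical round-robin router over Virtual Instance names."""

    def __init__(self, instances):
        self._instances = list(instances)
        self._cycle = cycle(self._instances)

    def add_instance(self, name):
        # A new instance reads from shared hot storage, so in this model
        # adding it is only a routing change; no data is copied.
        self._instances.append(name)
        self._cycle = cycle(self._instances)

    def route(self, query):
        # Pick the next instance in rotation for this query.
        return next(self._cycle)


router = QueryRouter(["vi-1"])
low = Counter(router.route(f"q{i}") for i in range(4))

router.add_instance("vi-2")  # query volume went up: scale out
high = Counter(router.route(f"q{i}") for i in range(4))

print(low)   # all four queries land on vi-1
print(high)  # queries alternate between vi-1 and vi-2
```

The point of the sketch is that scaling out touches only the routing layer, which is why no replica-building step appears anywhere in it.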

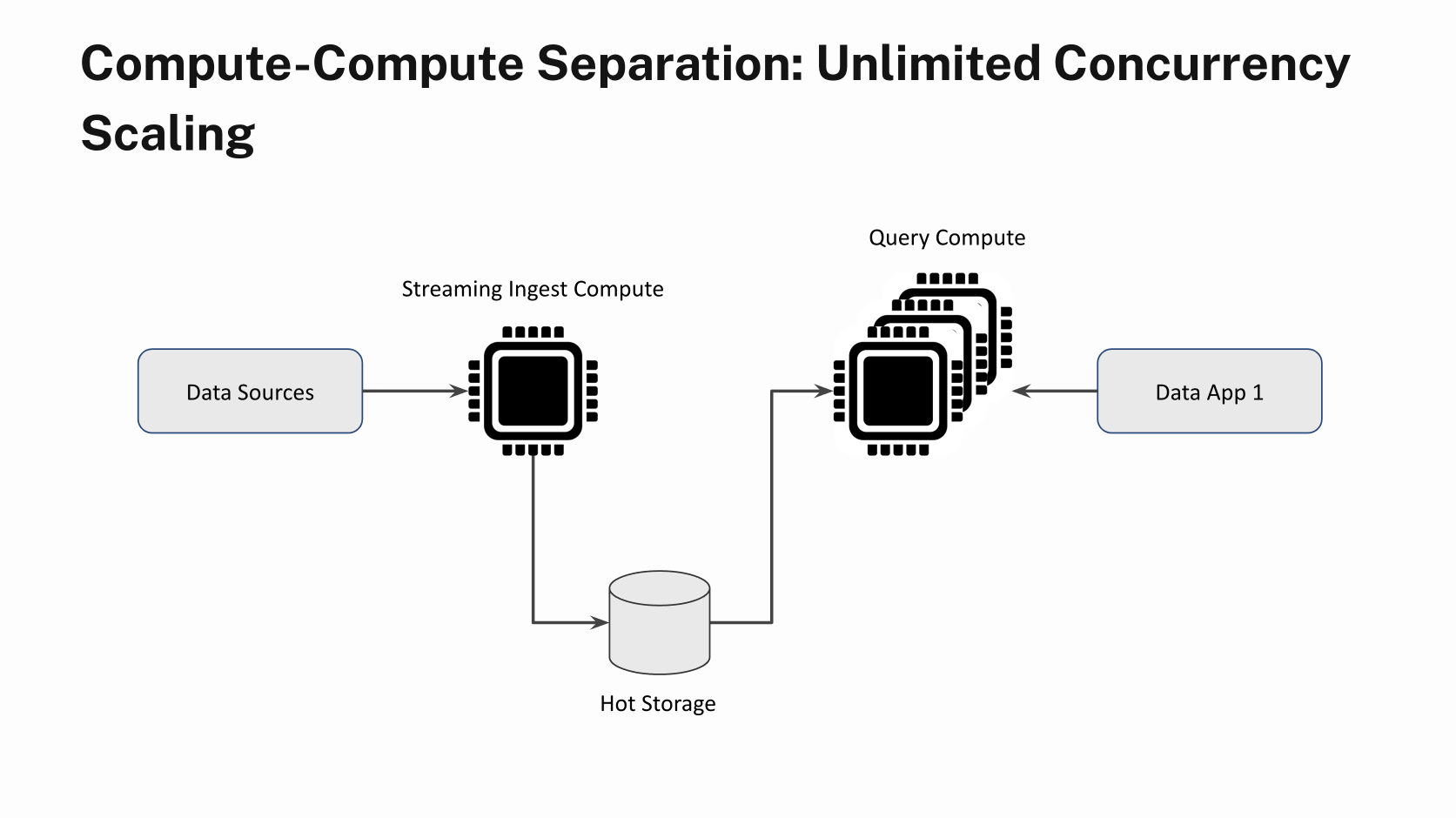

Workload Isolation: Every Virtual Instance in Rockset is completely isolated from every other Virtual Instance. You can have one Virtual Instance processing new writes and updating the hot storage while a different Virtual Instance processes all the queries. The benefit is that a bursty write workload doesn’t impact query latencies. This is one reason why p95 query latencies stay low. This design pattern is called Compute-Compute Separation.

Query Optimization: Rockset uses a Converged Index to narrow each query down to the smallest sliver of data needed to answer it. This reduces the amount of compute needed per query, thus improving QPS. Rockset uses the open-source storage engine RocksDB to store and access the Converged Index.
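
As a rough intuition for why an index lookup beats a scan, the sketch below builds a toy inverted index over two fields. This is not the Converged Index or its RocksDB layout; the documents, the `build_inverted_index` and `lookup` helpers, and the in-memory dictionary are all hypothetical.

```python
from collections import defaultdict

# Hypothetical documents; the field names mirror the customer's query predicates.
docs = {
    1: {"processBy": "alice", "emails": ["a@example.com", "b@example.com"]},
    2: {"processBy": "bob", "emails": ["b@example.com"]},
    3: {"processBy": "alice", "emails": []},
}


def build_inverted_index(documents):
    """Map each (field, value) pair to the set of document ids containing it."""
    index = defaultdict(set)
    for doc_id, doc in documents.items():
        for field, value in doc.items():
            # Index every element of an array field individually.
            values = value if isinstance(value, list) else [value]
            for v in values:
                index[(field, v)].add(doc_id)
    return index


index = build_inverted_index(docs)


def lookup(field, value):
    """Answer an equality or array-membership predicate without scanning docs."""
    return sorted(index.get((field, value), set()))


by_processor = lookup("processBy", "alice")
by_email = lookup("emails", "b@example.com")
print(by_processor, by_email)
```

Each lookup touches only the entries for one `(field, value)` pair, so the work per query depends on the number of matches rather than on the size of the collection.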

Concurrency: Rockset employs query admission control to maintain stability under heavy load, so that the system doesn’t try to run too many things concurrently and get worse at all of them. It enforces this through two settings: the Concurrent Query Execution Limit, which specifies the total number of queries allowed to be processed concurrently, and the Concurrent Query Limit, which decides how many queries that overflow from the execution limit can be queued for execution.

This is especially important when the QPS is in the thousands; if we process all incoming queries concurrently, context switches and other overhead cause all the queries to take longer. A better approach is to concurrently process only as many queries as needed to keep all the CPUs at full throttle, and queue any remaining queries until CPU is available. Rockset’s Concurrent Query Execution Limit and Concurrent Query Limit settings let you tune these queues based on your workload.
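
The interplay of the two limits can be sketched as a small state machine. The `AdmissionController` class and its parameter names are hypothetical, and rejecting queries once the queue is full is an assumption about overflow behavior rather than something stated here.

```python
from collections import deque


class AdmissionController:
    """Toy admission control with an execution limit and a bounded queue."""

    def __init__(self, execution_limit, queue_limit):
        self.execution_limit = execution_limit  # max queries running at once
        self.queue_limit = queue_limit          # max queries waiting to run
        self.running = set()
        self.queued = deque()

    def submit(self, query_id):
        if len(self.running) < self.execution_limit:
            self.running.add(query_id)
            return "running"
        if len(self.queued) < self.queue_limit:
            self.queued.append(query_id)
            return "queued"
        # Assumption: anything beyond both limits is turned away.
        return "rejected"

    def finish(self, query_id):
        self.running.remove(query_id)
        # A freed execution slot goes to the oldest queued query.
        if self.queued:
            self.running.add(self.queued.popleft())


ctl = AdmissionController(execution_limit=2, queue_limit=1)
states = [ctl.submit(q) for q in ("q1", "q2", "q3", "q4")]
ctl.finish("q1")
print(states, sorted(ctl.running), list(ctl.queued))
```

Setting the execution limit near the number of queries the CPUs can keep busy, and using the queue to absorb short bursts, is the tuning idea described above.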

Data Sharding: Rockset uses document sharding to spread its data across multiple nodes in a Virtual Instance. A single query can leverage compute from all the nodes in a Virtual Instance. This simplifies load balancing and improves data locality and query performance.
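
Here is a minimal sketch of document sharding with a scatter-gather query. Placing documents by a hash of their id is an assumption made for illustration (the text above says only that documents are spread across nodes), and `NUM_NODES`, `shard_for`, and `scatter_gather` are hypothetical names.

```python
import zlib

NUM_NODES = 4  # hypothetical number of nodes in one Virtual Instance


def shard_for(doc_id):
    # crc32 is stable across runs, unlike Python's built-in hash() for strings.
    return zlib.crc32(doc_id.encode("utf-8")) % NUM_NODES


# Each inner list stands in for the documents held by one node.
nodes = [[] for _ in range(NUM_NODES)]
documents = [
    {"_id": f"doc-{i}", "processBy": "alice" if i % 3 == 0 else "bob"}
    for i in range(12)
]
for doc in documents:
    nodes[shard_for(doc["_id"])].append(doc)


def scatter_gather(predicate):
    """Run the predicate on every node's shard, then merge the partial results."""
    partials = [[d for d in shard if predicate(d)] for shard in nodes]
    return [d for part in partials for d in part]


matches = scatter_gather(lambda d: d["processBy"] == "alice")
print(sorted(d["_id"] for d in matches))
```

Because every node holds a slice of the documents, one query fans out to all of them and each node filters only its own slice.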

A peek into the customer workload

Data and queries: The dataset for this customer was 4.5TB in size with a total of 750M rows. Average document size was ~9KB, with mixed types and some deeply nested fields. The workload consists of two types of queries:

select * from collection_name where processBy = :processBy

select * from collection_name where array_contains(emails, :email)

The predicate in each query is parameterized so that every run picks a different value for the parameter at query time.

A Rockset Virtual Instance is a cluster of compute and cache and comes in T-shirt sizes. In this case, the workload uses multiple 8XL Virtual Instances for queries and a single XL Virtual Instance to process concurrent updates. An 8XL has 256 vCPUs while an XL has 32 vCPUs.

Here is a sample document. Note the deep levels of nesting in these documents. Unlike with other OLAP databases, you don’t need to flatten these documents when you store them in Rockset, and a query can access any field in the nested document without impacting QPS.

Updates: A continuous stream of updates to existing records flows in at about 10 MB/sec. This update stream is continuously processed by an XL Virtual Instance. The updates are visible to all Virtual Instances in this setup within a few milliseconds. A separate set of Virtual Instances is used to process the query load, as described below.

Demonstrating QPS scaling linearly with compute resources

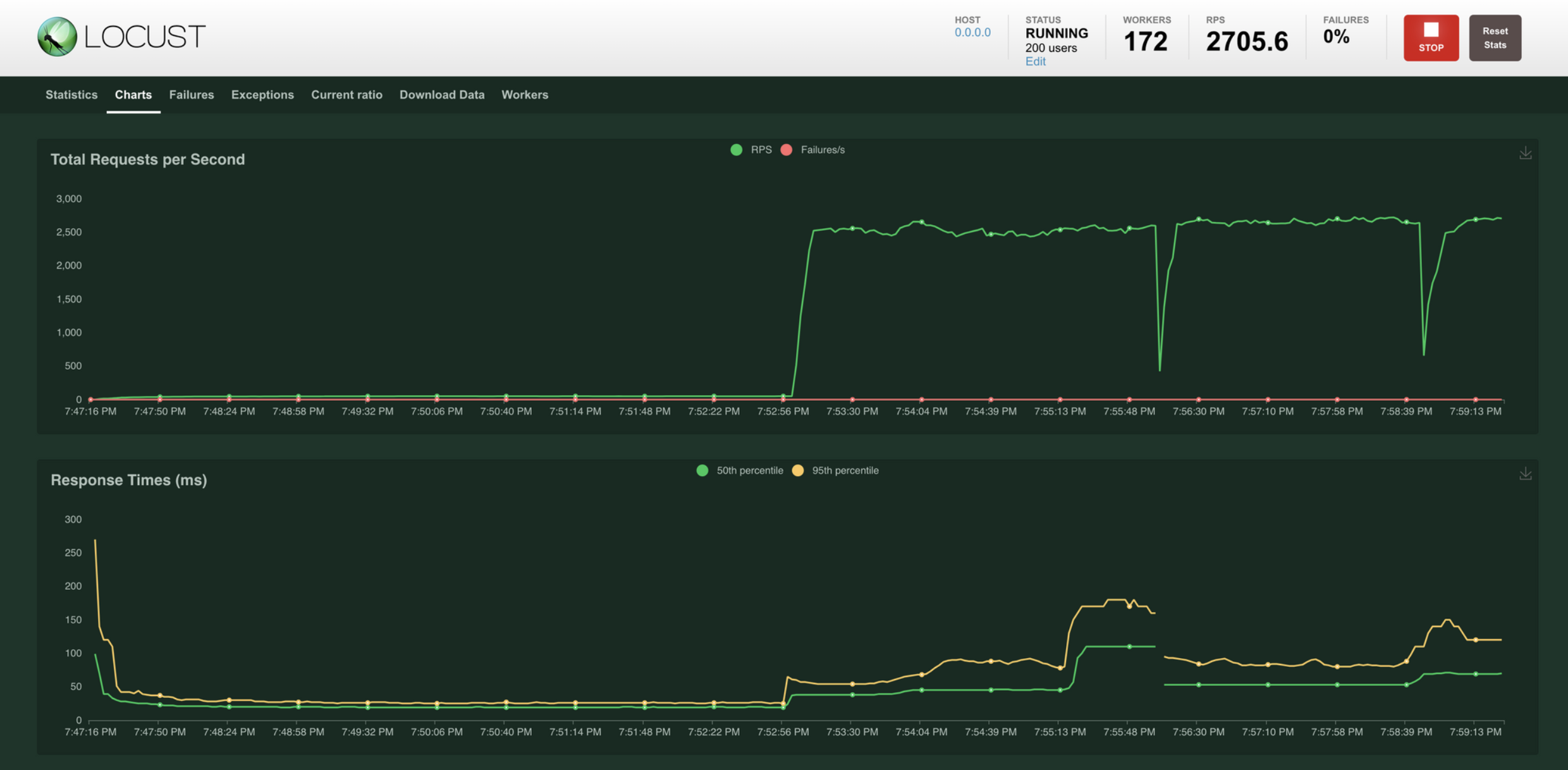

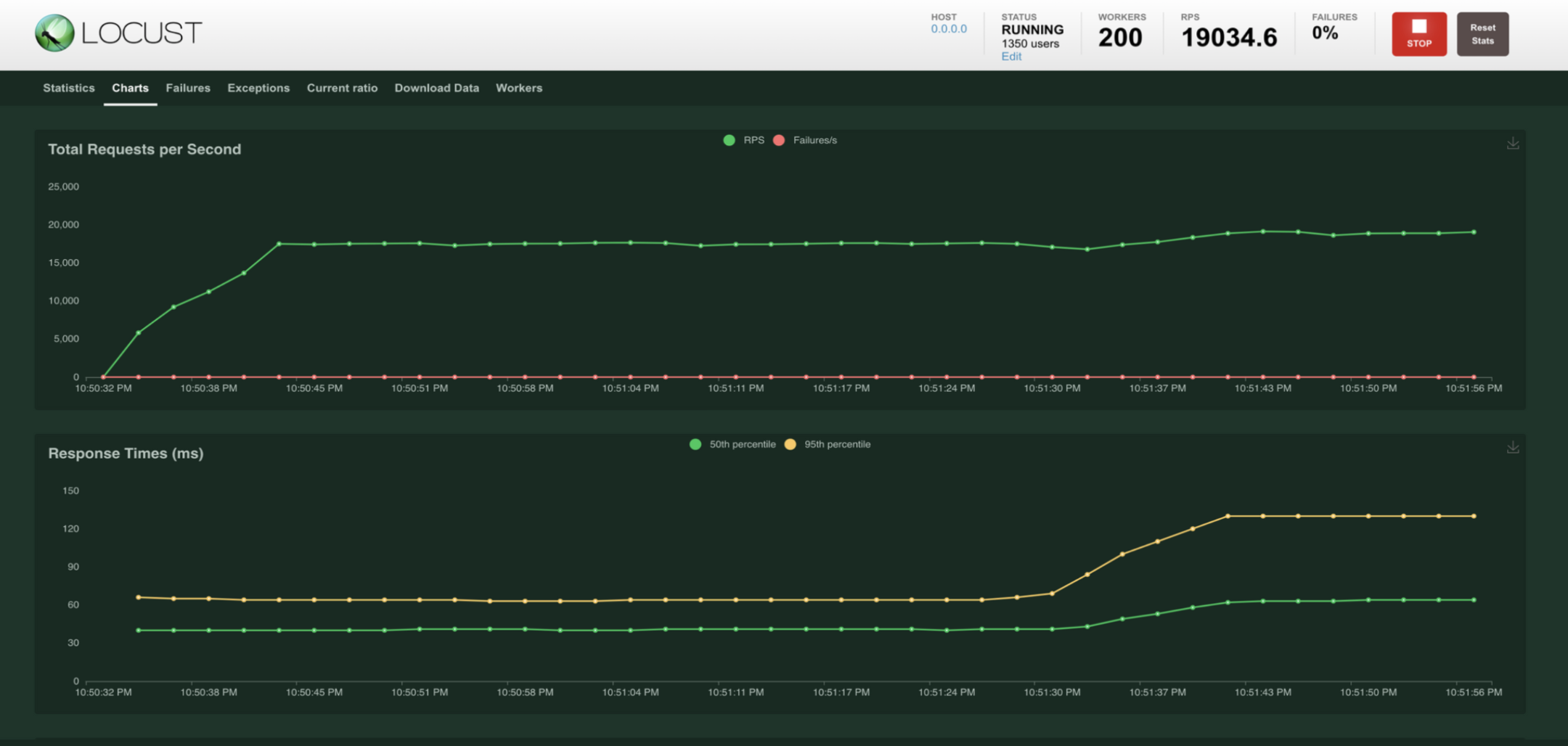

A distributed query generator based on Locust was used to drive up to 20K QPS on the customer dataset. Starting with a single 8XL Virtual Instance, we observed that it sustained around 2700 QPS at sub-200ms p95 query latency.

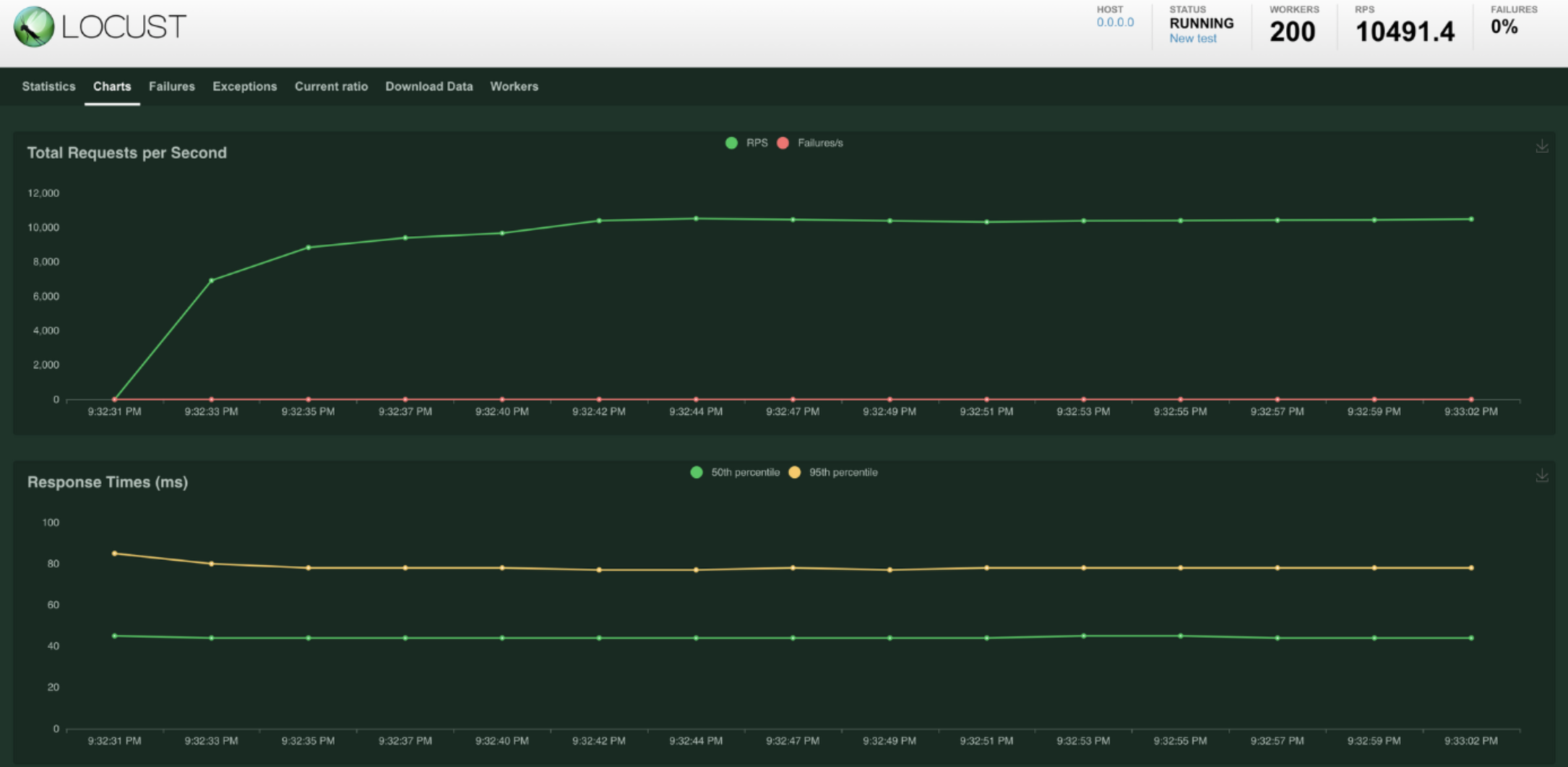

After scaling out to four 8XL Virtual Instances, we observed that the setup sustained around 10K QPS at sub-200ms p95 query latency.

And after scaling to eight 8XL Virtual Instances, we observed that it continued to scale linearly and sustained around 19K QPS at sub-200ms p95 query latency.
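
As a quick check on how close to linear these numbers are, the sketch below computes per-instance throughput and scaling efficiency relative to the single-instance baseline. The inputs are the rounded "around" figures quoted above, so the outputs are approximate; the variable names are illustrative.

```python
# Rounded QPS figures quoted above, keyed by number of 8XL Virtual Instances.
measurements = {1: 2700, 4: 10_000, 8: 19_000}

baseline_qps = measurements[1]


def scaling_efficiency(instances, qps):
    """Observed QPS divided by perfectly linear QPS (instances * baseline)."""
    return qps / (instances * baseline_qps)


per_instance = {n: qps / n for n, qps in measurements.items()}
efficiency = {n: round(scaling_efficiency(n, qps), 3) for n, qps in measurements.items()}

for n in measurements:
    print(f"{n} x 8XL: {per_instance[n]:.0f} QPS per instance, "
          f"{efficiency[n]:.1%} of linear")
```

With these figures, four instances reach about 93% of perfectly linear throughput and eight reach about 88%, which is in line with the near-linear scale-up reported here.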

Data freshness

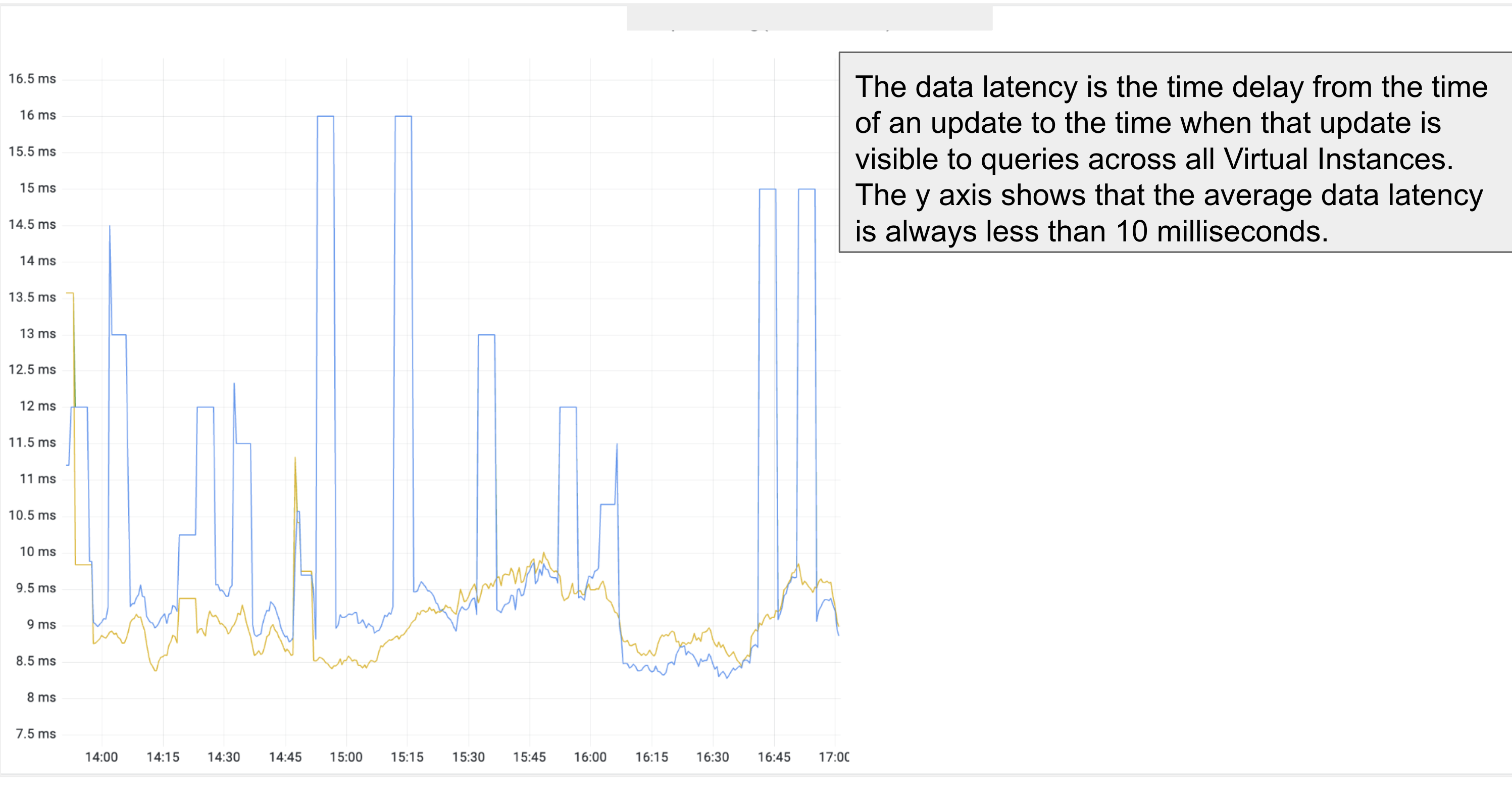

The data updates occur on one Virtual Instance and the queries run on eight different Virtual Instances. So the natural question is, “Are the updates visible on all Virtual Instances, and if so, how long does it take for the updates to be visible in queries?”

The data freshness metric, also called data latency, is in single-digit milliseconds across all the Virtual Instances, as shown in the graph above. This is a true measure of the real-time characteristic of Rockset under high write rates and high QPS!

Takeaways

The results show that Rockset can reach near-linear QPS scale-up: it is as easy as creating new Virtual Instances and spreading the query load across all of them. There is no need to make replicas of data. At the same time, Rockset continues to process updates concurrently. We’re excited about the possibilities that lie ahead as we continue to push the boundaries of what is possible with high QPS.