Introduction

The bin packing drawback is a basic optimization problem that has far-reaching implications for enterprise organizations throughout industries. At its core, the issue focuses on discovering probably the most environment friendly method to pack a set of objects right into a finite variety of containers or “bins”, with the aim of minimizing wasted house.

This problem is pervasive in real-world functions, from optimizing delivery and logistics to effectively allocating sources in knowledge facilities and cloud computing environments. With organizations typically coping with giant numbers of things and containers, discovering optimum packing options can result in vital price financial savings and operational efficiencies.

For a number one $10B industrial tools producer, bin packing is an integral a part of their provide chain. It’s common for this firm to ship containers to distributors to fill with bought components which are then used within the manufacturing technique of heavy tools and autos. With the growing complexity of provide chains and variable manufacturing targets, the packaging engineering group wanted to make sure meeting traces have the correct variety of components obtainable whereas effectively utilizing house.

For instance, an meeting line wants ample metal bolts on-hand so manufacturing by no means slows, however it’s a waste of manufacturing unit flooring house to have a delivery container stuffed with them when only some dozen are wanted per day. Step one in fixing this drawback is bin packing, or modeling how hundreds of components slot in all of the doable containers, so engineers can then automate the method of container choice for improved productiveness.

| Problem ❗Wasted house in packaging containers ❗Extreme truck loading & carbon footprint |

Goal ✅ Reduce empty house in packaging container ✅ Maximize truck loading capability to scale back carbon footprint |

|---|---|

|

|

Technical Challenges

Whereas the bin packing drawback has been extensively studied in an instructional setting, effectively simulating and fixing it throughout advanced real-world datasets and at scale has remained a problem for a lot of organizations.

In some sense, this drawback is straightforward sufficient for anybody to grasp: put issues in a field till full. However as with most large knowledge issues, challenges come up due to the sheer scale of the computations to be carried out. For this Databricks buyer’s bin packing simulation, we are able to use a easy psychological mannequin for the optimization job. Utilizing pseudocode:

For (i in gadgets): The method wants to run for each merchandise in stock (~1,000’s)

↳ For (c in containers): Strive the match for each kind of container (~10’s)

↳ For (o in orientations): The beginning orientations of the first merchandise should every be modeled (==6)

↳ Pack_container Lastly, attempt filling a container with gadgets with a beginning orientationWhat if we had been to run this looping course of sequentially utilizing single-node Python? If we now have hundreds of thousands of iterations (e.g. 20,000 gadgets x 20 containers x 6 beginning orientations = 2.4M combos), this might take a whole lot of hours to compute (e.g. 2.4M combos x 1 second every / 3600 seconds per hour = ~660 hours = 27 days). Ready for practically a month for these outcomes, that are themselves an enter to a later modeling step, is untenable: we should give you a extra environment friendly method to compute somewhat than a serial/sequential course of.

Scientific Computing With Ray

As a computing platform, Databricks has at all times supplied help for these scientific computing use-cases, however scaling them poses a problem: most optimization and simulation libraries are written assuming a single-node processing atmosphere, and scaling them with Spark requires expertise with instruments similar to Pandas UDFs.

With Ray’s common availability on Databricks in early 2024, clients have a brand new device of their scientific computing toolbox to scale advanced optimization issues. Whereas additionally supporting superior AI capabilities like reinforcement studying and distributed ML, this weblog focuses on Ray Core to reinforce customized Python workflows that require nesting, advanced orchestration, and communication between duties.

Modeling a Bin Packing Downside

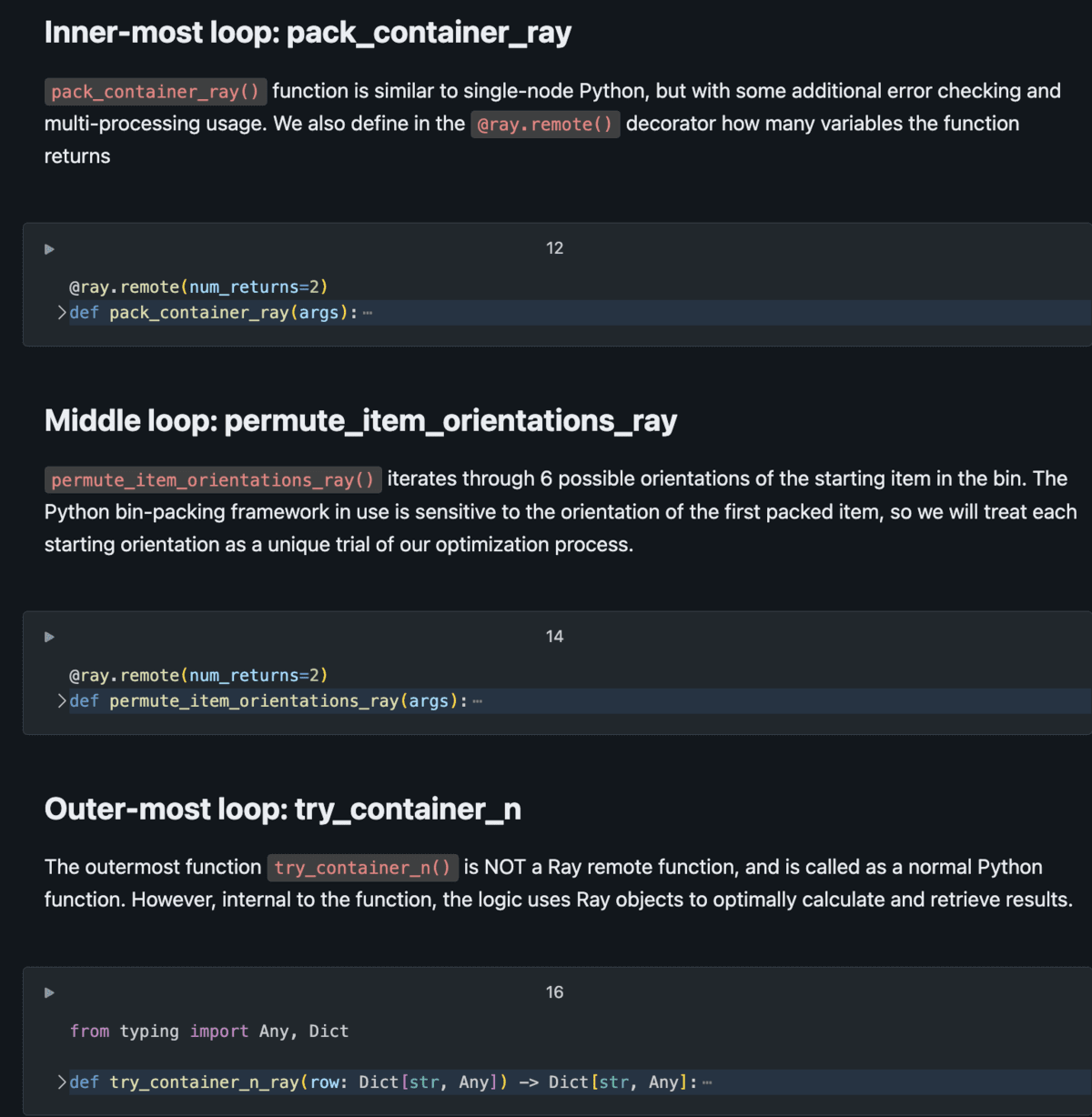

To successfully use Ray to scale scientific computing, the issue have to be logically parallelizable. That’s, should you can mannequin an issue as a collection of concurrent simulations or trials to run, Ray may help scale it. Bin packing is a superb match for this, as one can check totally different gadgets in several containers in several orientations all on the identical time. With Ray, this bin packing drawback could be modeled as a set of nested distant features, permitting hundreds of concurrent trials to run concurrently, with the diploma of parallelism restricted by the variety of cores in a cluster.

The diagram beneath demonstrates the essential setup of this modeling drawback.

The Python script consists of nested duties, the place outer duties name the inside duties a number of occasions per iteration. Utilizing distant duties (as an alternative of regular Python features), we now have the power to massively distribute these duties throughout the cluster with Ray Core managing the execution graph and returning outcomes effectively. See the Databricks Answer Accelerator scientific-computing-ray-on-spark for full implementation particulars.

Efficiency & Outcomes

With the methods described on this weblog and demonstrated within the related Github repo, this buyer was capable of:

- Scale back container choice time: The adoption of the 3D bin packing algorithm marks a major development, providing an answer that’s not solely extra correct but additionally significantly quicker, decreasing the time required for container choice by an element of 40x as in comparison with legacy processes.

- Scale the method linearly: with Ray, the time to complete the modeling course of could be linearly scaled with the variety of cores in our cluster. Taking the instance with 2.4 million combos from the highest (that might have taken 660 hours to finish on a single thread): if we would like the method to run in a single day in 12 hours, we’d like: 2.4M / (12hr x 3600sec) = 56 cores; to finish in 3 hours, we would want 220 cores. On Databricks, that is simply managed through a cluster configuration.

- Considerably cut back code complexity: Ray streamlines code complexity, providing a extra intuitive various to the unique optimization job constructed with Python’s multiprocessing and threading libraries. The earlier implementation required intricate information of those libraries attributable to nested logic constructions. In distinction, Ray’s method simplifies the codebase, making it extra accessible to knowledge group members. The ensuing code is just not solely simpler to grasp but additionally aligns extra intently with idiomatic Python practices, enhancing total maintainability and effectivity.

Extensibility for Scientific Computing

The mix of automation, batch processing, and optimized container choice has led to measurable enhancements for this industrial producer, together with a major discount in delivery and packaging prices, and a dramatic enhance in course of effectivity. With the bin packing drawback dealt with, knowledge group members are shifting on to different domains of scientific computing for his or her enterprise, together with optimization and linear-programming centered challenges. The capabilities supplied by the Databricks Lakehouse platform provide a possibility to not solely mannequin new enterprise issues for the primary time, but additionally dramatically enhance legacy scientific computing methods which were in use for years.

In tandem with Spark, the de facto customary for knowledge parallel duties, Ray may help make any “logic-parallel” drawback extra environment friendly. Modeling processes which are purely depending on the quantity of compute obtainable are a robust device for companies to create data-driven companies.

See the Databricks Answer Accelerator scientific-computing-ray-on-spark.