We’re excited to announce that Databricks now supports Amazon EC2 G6 instances powered by NVIDIA L4 Tensor Core GPUs. This addition marks a step forward in enabling more efficient and scalable data processing, machine learning, and AI workloads on the Databricks Data Intelligence Platform.

Why AWS G6 GPU Instances?

Amazon Web Services (AWS) G6 instances are powered by lower-cost, energy-efficient NVIDIA L4 GPUs. Based on NVIDIA’s Ada Lovelace architecture with 4th-generation Tensor Cores, these GPUs support demanding AI and machine learning workloads:

- G6 instances deliver up to 2x higher performance for deep learning inference and graphics workloads compared to G4dn instances, which run on NVIDIA T4 GPUs.

- G6 instances have twice the compute power but only half the memory bandwidth of G5 instances, which are powered by NVIDIA A10G Tensor Core GPUs. (Note: Most LLM and other autoregressive transformer model inference tends to be memory-bound, meaning that the A10G may still be a better choice for applications such as chat, while the L4 is performance-optimized for inference on compute-bound workloads.)
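
To make the memory-bound versus compute-bound note concrete, here is a minimal sketch using a simple roofline-style bound. It is an illustration, not a benchmark: the only inputs are the ratios quoted above (the L4 at 2x the compute and 0.5x the memory bandwidth of the A10G), expressed in normalized units, and the `Gpu` class and `faster_gpu` helper are hypothetical names introduced for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gpu:
    name: str
    peak_compute: float   # normalized: A10G = 1.0 (assumption, not a spec-sheet value)
    mem_bandwidth: float  # normalized: A10G = 1.0 (assumption, not a spec-sheet value)


def attainable(gpu: Gpu, intensity: float) -> float:
    """Roofline bound: throughput is capped either by peak compute or by
    memory bandwidth times arithmetic intensity (work done per byte moved)."""
    return min(gpu.peak_compute, gpu.mem_bandwidth * intensity)


# Ratios taken from the comparison in the text: 2x compute, 0.5x bandwidth.
A10G = Gpu("A10G", peak_compute=1.0, mem_bandwidth=1.0)
L4 = Gpu("L4", peak_compute=2.0, mem_bandwidth=0.5)


def faster_gpu(intensity: float) -> str:
    """Return which GPU has the higher roofline bound at this intensity."""
    a10g, l4 = attainable(A10G, intensity), attainable(L4, intensity)
    if a10g == l4:
        return "tie"
    return "L4" if l4 > a10g else "A10G"


if __name__ == "__main__":
    for intensity in (0.5, 1.0, 2.0, 4.0, 8.0):
        print(f"intensity={intensity}: {faster_gpu(intensity)}")
```

Under these assumptions, low-intensity work (little compute per byte read, as in token-by-token LLM decoding) favors the A10G, and the L4 only pulls ahead once a workload does enough compute per byte to move past the crossover point. Real workloads depend on many factors this model ignores, so treat it as intuition rather than a sizing tool.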

Use Cases: Accelerating Your AI and Machine Learning Workflows

- Deep learning inference: The L4 GPU is optimized for batch inference workloads, providing a balance between high computational power and energy efficiency. It offers excellent support for TensorRT and other inference-optimized libraries, which help reduce latency and improve throughput in applications like computer vision, natural language processing, and recommendation systems.

- Image and audio preprocessing: The L4 GPU excels at parallel processing, which is key for data-intensive tasks like image and audio preprocessing. For example, image or video decoding and transformations benefit from running on the GPU.

- Training deep learning models: The L4 GPU is highly efficient for training relatively small deep learning models (fewer than 1B parameters).
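
One plausible way to see why the training use case is framed around models under 1B parameters is a back-of-the-envelope memory estimate. The sketch below is an assumption-laden illustration, not Databricks guidance: it uses the common rule of thumb of roughly 16 bytes per parameter for mixed-precision training with Adam (weights, gradients, and optimizer state), the L4's 24 GB of GPU memory, and a hypothetical `headroom_fraction` reserved for activations and framework overhead.

```python
# Rule of thumb (assumption): mixed-precision Adam keeps ~16 bytes per parameter
# (fp16 weights and gradients plus fp32 master weights, momentum, and variance).
BYTES_PER_PARAM_MIXED_ADAM = 16

# NVIDIA L4 GPU memory in GB (nominal).
L4_MEMORY_GB = 24


def training_state_gb(num_params: int,
                      bytes_per_param: int = BYTES_PER_PARAM_MIXED_ADAM) -> float:
    """Approximate memory for weights, gradients, and optimizer state, in GB.
    Activations are NOT included; they depend on batch size and architecture."""
    return num_params * bytes_per_param / 1e9


def fits_on_single_l4(num_params: int, headroom_fraction: float = 0.25) -> bool:
    """Check the estimate against one L4, keeping a hypothetical share of
    memory free for activations and framework overhead."""
    budget_gb = L4_MEMORY_GB * (1 - headroom_fraction)
    return training_state_gb(num_params) <= budget_gb


if __name__ == "__main__":
    for params in (350_000_000, 1_000_000_000, 3_000_000_000):
        print(f"{params:>13,} params: ~{training_state_gb(params):.1f} GB of state, "
              f"fits on one L4: {fits_on_single_l4(params)}")
```

With these assumptions, a 1B-parameter model needs about 16 GB of training state, which leaves only modest room for activations on a single 24 GB GPU, while a 3B-parameter model already exceeds it. Techniques such as parameter-efficient fine-tuning or multi-GPU sharding change this picture and are outside the scope of this estimate.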

How to Get Started

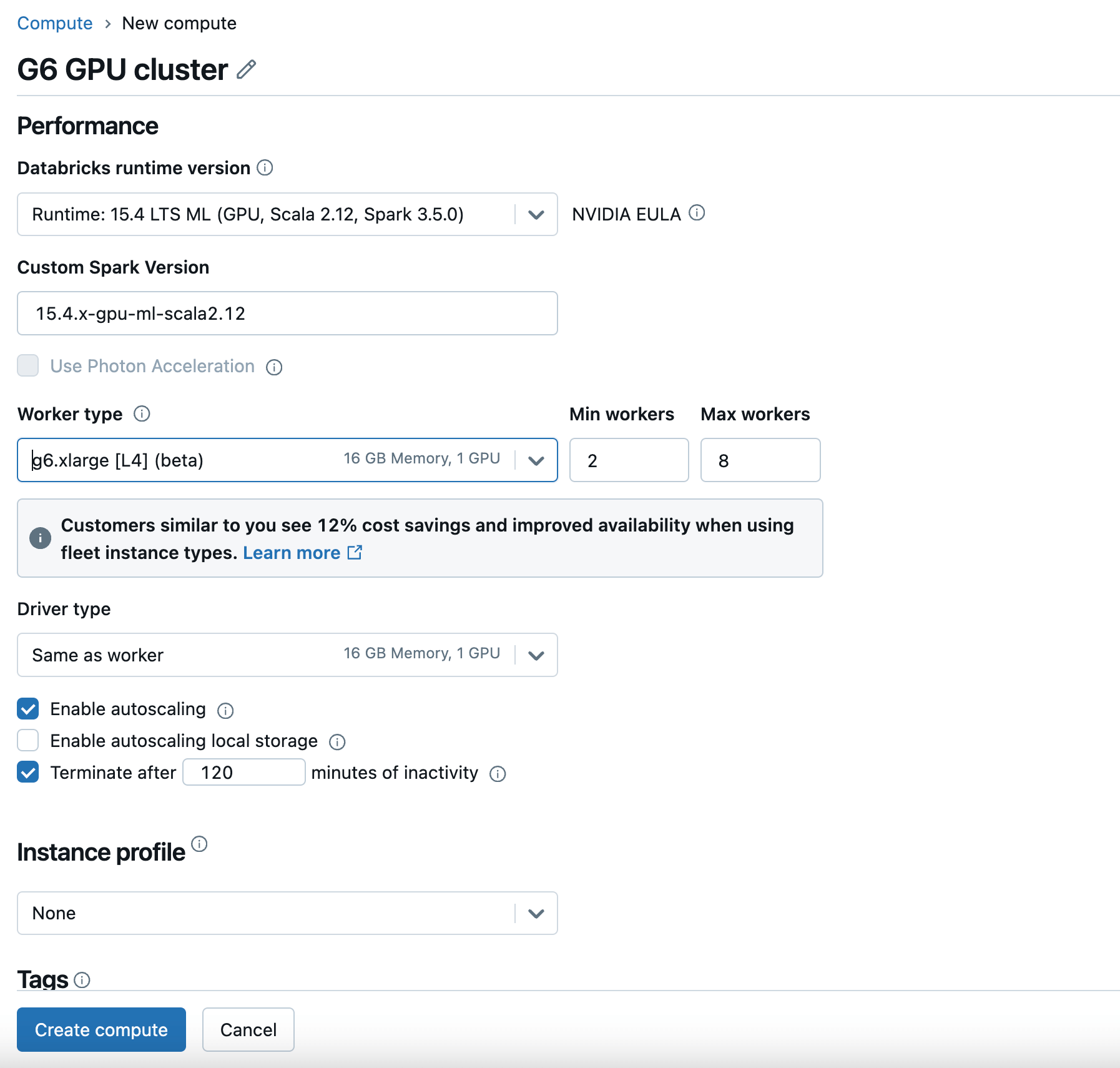

To start using G6 GPU instances on Databricks, simply create a new compute resource with a GPU-enabled Databricks Runtime Version and choose G6 as the Worker Type and Driver Type. For details, check the Databricks documentation.
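
If you prefer to define compute programmatically, the same choices can be expressed as a request body for the Databricks Clusters API. The sketch below only builds and prints the JSON payload; it does not call the API. The `build_g6_cluster_spec` helper is a hypothetical name, and the node type `g6.xlarge` and the runtime key shown in the usage are illustrative assumptions: check which G6 sizes and GPU runtime versions your workspace actually lists before using them.

```python
import json


def build_g6_cluster_spec(cluster_name: str,
                          spark_version: str,
                          node_type_id: str = "g6.xlarge",
                          num_workers: int = 1,
                          autotermination_minutes: int = 60) -> dict:
    """Build a cluster-create payload that uses the same G6 instance type
    for both workers and the driver.

    Assumption: GPU-enabled runtime keys contain "gpu"; this check is a
    simple heuristic, not an official validation rule.
    """
    if "gpu" not in spark_version:
        raise ValueError("expected a GPU-enabled Databricks Runtime version key")
    if not node_type_id.startswith("g6."):
        raise ValueError("expected a G6 instance type such as 'g6.xlarge'")
    return {
        "cluster_name": cluster_name,
        "spark_version": spark_version,
        "node_type_id": node_type_id,          # Worker Type
        "driver_node_type_id": node_type_id,   # Driver Type
        "num_workers": num_workers,
        "autotermination_minutes": autotermination_minutes,
    }


if __name__ == "__main__":
    # The runtime key below is an example format only; use one from your workspace.
    spec = build_g6_cluster_spec("g6-batch-inference", "15.4.x-gpu-ml-scala2.12")
    print(json.dumps(spec, indent=2))
```

The resulting dictionary could be sent to the cluster-create endpoint with your usual client or saved as a JSON file; authentication and the request itself are intentionally left out here.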

G6 instances are available now in the AWS US East (N. Virginia and Ohio) and US West (Oregon) regions. Check the AWS documentation for additional regions as they become available.

Looking Ahead

The addition of G6 GPU support on AWS is one of the many steps we’re taking to ensure that Databricks remains at the forefront of AI and data analytics innovation. We recognize that our customers are eager to take advantage of cutting-edge platform capabilities and gain insights from their proprietary data. We will continue to support more GPU instance types, such as Gr6 and P5e instances, and more GPU types, such as AMD GPUs. Our goal is to support AI compute innovations as they become available to our customers.

Conclusion

Whether you’re a researcher who wants to train deep learning (DL) models like recommendation systems, a data scientist who wants to run DL batch inference on your data from Unity Catalog (UC), or a data engineer who wants to process your video and audio data, this latest integration ensures that Databricks continues to provide a robust, future-ready platform for all your data and AI needs.

Get started today and experience the next level of performance for your data and machine learning workloads on Databricks.