Note: for important background on vector search, see part 1 of our Introduction to Semantic Search: From Keywords to Vectors.

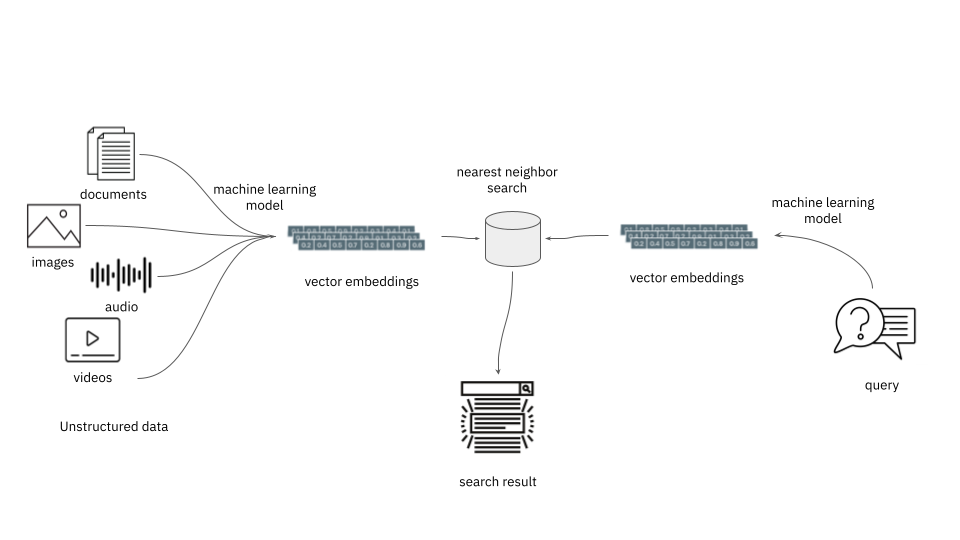

When building a vector search app, you’re going to end up managing a lot of vectors, also known as embeddings. One of the most common operations in these apps is finding other nearby vectors. A vector database not only stores embeddings but also facilitates such common search operations over them.

Finding nearby vectors is useful because semantically similar items end up close to each other in the embedding space. In other words, finding the nearest neighbors is the operation used to find similar items. With embedding schemes available for multilingual text, images, sounds, data, and many other use cases, this is a compelling feature.

Generating Embeddings

A key decision point in developing a semantic search app that uses vectors is choosing which embedding service to use. Every item you want to search on will need to be processed to produce an embedding, as will every query. Depending on your workload, there may be significant overhead involved in preparing these embeddings. If the embedding provider is in the cloud, then the availability of your system, even for queries, will depend on the availability of the provider.

This decision deserves due consideration, since changing embeddings will typically entail repopulating the whole database, an expensive proposition. Different models produce embeddings in different embedding spaces, so embeddings generated with different models are typically not comparable. Some vector databases, however, will allow multiple embeddings to be stored for a given item.

One popular cloud-hosted embedding service for text is OpenAI’s Ada v2. It costs a few cents to process a million tokens and is widely used across different industries. Google, Microsoft, HuggingFace, and others also provide online options.

If your data is too sensitive to send outside your walls, or if system availability is of paramount concern, it’s possible to produce embeddings locally. Some popular libraries to do this include SentenceTransformers, GenSim, and several Natural Language Processing (NLP) frameworks.

For content other than text, a wide variety of embedding models is available. For example, SentenceTransformers allows images and text to be in the same embedding space, so an app could find images similar to words, and vice versa. A host of different models are available, and this is a rapidly growing area of development.

Nearest Neighbor Search

What precisely is meant by “nearby” vectors? To determine if vectors are semantically similar (or different), you will need to compute distances, with a function known as a distance measure. (You may see this also called a metric, which has a stricter definition; in practice, the terms are often used interchangeably.) Typically, a vector database will have optimized indexes based on a set of available measures. Here are a few of the common ones:

A direct, straight-line distance between two points is called a Euclidean distance metric, or sometimes L2, and is widely supported. The calculation in two dimensions, using x and y to represent the change along each axis, is sqrt(x^2 + y^2). Keep in mind, though, that actual vectors may have thousands of dimensions or more, and the calculation must include a term for every one of them.

Another is the Manhattan distance metric, sometimes called L1. This is like Euclidean if you skip all the multiplications and the square root. In the same notation as before, it is simply abs(x) + abs(y). Think of it as the distance you’d need to walk, following only right-angle paths on a grid.

In some cases, the angle between two vectors can be used as a measure. A dot product, or inner product, is the mathematical tool used in this case, and some hardware is specially optimized for these calculations. It incorporates the angle between vectors as well as their lengths. In contrast, a cosine measure or cosine similarity accounts for angles alone, producing a value that ranges from 1.0 (vectors pointing in the same direction) through 0 (vectors orthogonal) to -1.0 (vectors 180 degrees apart).
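To make the measures above concrete, here is a minimal sketch in plain Python. The function names are illustrative only and are not taken from any particular vector database; a real system would use optimized, vectorized implementations. The sketch also assumes both vectors have the same number of dimensions and, for cosine similarity, that neither is all zeros.

```python
import math


def euclidean(a, b):
    """L2: straight-line distance, summing a squared term per dimension."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def manhattan(a, b):
    """L1: sum of absolute differences, like walking a right-angle grid."""
    return sum(abs(x - y) for x, y in zip(a, b))


def dot(a, b):
    """Dot (inner) product: reflects both the angle and the lengths."""
    return sum(x * y for x, y in zip(a, b))


def cosine_similarity(a, b):
    """Dot product divided by both lengths, leaving only the angle.

    Assumes neither vector is all zeros (otherwise this divides by zero).
    """
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))


origin = [0.0, 0.0]
point = [3.0, 4.0]
print(euclidean(origin, point))   # straight-line distance
print(manhattan(origin, point))   # grid-walking distance
print(cosine_similarity([1.0, 0.0], [0.0, 1.0]))  # orthogonal vectors
```

Note that Euclidean and Manhattan are distances (smaller means closer), while dot product and cosine are similarities (larger means closer), so they sort in opposite directions.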

There are quite a few specialized distance metrics, but these are less commonly implemented “out of the box.” Many vector databases allow for custom distance metrics to be plugged into the system.

Which distance measure should you choose? Often, the documentation for an embedding model will say what to use, and you should follow such advice. Otherwise, Euclidean is a good starting point, unless you have specific reasons to think otherwise. It may be worth experimenting with different distance measures to see which one works best in your application.

Without some clever tricks, finding the nearest point in embedding space would, in the worst case, require the database to calculate the distance measure between a target vector and every other vector in the system, then sort the resulting list. This quickly gets out of hand as the size of the database grows. As a result, all production-level databases include approximate nearest neighbor (ANN) algorithms. These trade off a tiny bit of accuracy for much better performance. Research into ANN algorithms remains a hot topic, and a strong implementation of one can be a key factor in the choice of a vector database.
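The exhaustive approach described above can be sketched in a few lines of Python. This is an illustration of the exact, brute-force baseline, not of an ANN algorithm, and the names (`exact_nearest`, the `vectors` dictionary) are hypothetical. It uses Euclidean distance and a heap to pick the top k instead of sorting the whole list, but it still computes one distance per stored vector, which is the cost ANN indexes are designed to avoid.

```python
import heapq
import math


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def exact_nearest(query, vectors, k=1):
    """Return the k closest (distance, item_id) pairs by scanning every vector.

    `vectors` maps an item id to its embedding. Work grows linearly with the
    number of stored vectors and with their dimensionality.
    """
    scored = ((euclidean(query, vec), item_id) for item_id, vec in vectors.items())
    return heapq.nsmallest(k, scored)


# Toy two-dimensional data; real embeddings have hundreds or thousands of dimensions.
vectors = {
    "a": [0.0, 0.0],
    "b": [1.0, 1.0],
    "c": [5.0, 5.0],
}
result = exact_nearest([0.9, 1.2], vectors, k=2)
print([item_id for _, item_id in result])  # ids ordered from nearest to farthest
```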

Selecting a Vector Database

Now that we’ve discussed some of the key elements that vector databases support (storing embeddings and computing vector similarity), how should you go about selecting a database for your app?

Search performance, measured by the time needed to resolve queries against vector indexes, is a primary consideration here. It’s worth understanding how a database implements approximate nearest neighbor indexing and matching, since this will affect the performance and scale of your application. But also investigate update performance, the latency between adding new vectors and having them appear in the results. Querying and ingesting vector data at the same time may have performance implications as well, so be sure to test this if you expect to do both concurrently.

Have a good idea of the scale of your project and how fast you expect your users and vector data to grow. How many embeddings are you going to need to store? Billion-scale vector search is certainly feasible today. Can your vector database scale to handle the queries-per-second (QPS) requirements of your application? Does performance degrade as the scale of the vector data increases? While it matters less what database is used for prototyping, you will want to give deeper consideration to what it would take to get your vector search app into production.
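A quick back-of-the-envelope calculation helps when sizing a project. The sketch below estimates only the raw storage for the vectors themselves, under two stated assumptions: each value is stored as a 4-byte float, and each embedding has 1536 dimensions (the output size of OpenAI’s Ada v2 model). Index structures, metadata, and replication add to this figure and vary by database, so treat the result as a lower bound.

```python
def raw_vector_bytes(num_vectors, dimensions, bytes_per_value=4):
    """Raw storage for the embeddings alone, excluding indexes and metadata.

    bytes_per_value=4 assumes 32-bit floats; this is an assumption, and some
    systems store compressed or quantized vectors instead.
    """
    return num_vectors * dimensions * bytes_per_value


size = raw_vector_bytes(1_000_000_000, 1536)
print(f"{size / 1e12:.2f} TB")  # prints 6.14 TB for one billion vectors
```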

Vector search applications often need metadata filtering as well, so it’s a good idea to understand how that filtering is performed, and how efficient it is, when researching vector databases. Does the database pre-filter, post-filter, or search and filter in a single step in order to filter vector search results using metadata? Different approaches will have different implications for the efficiency of your vector search.
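The difference between pre-filtering and post-filtering can be shown with a toy example. This is a simplified sketch using an exact scan over a small in-memory list; the item layout and function names are hypothetical, and real databases apply these strategies inside their ANN indexes. Pre-filtering narrows the candidates by metadata before ranking, while post-filtering ranks everything, keeps the top k, and only then discards non-matching items, which can return fewer than k results.

```python
import heapq
import math


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def pre_filter_search(query, items, predicate, k):
    """Apply the metadata filter first, then rank only the survivors."""
    candidates = [it for it in items if predicate(it["meta"])]
    return heapq.nsmallest(k, candidates, key=lambda it: euclidean(query, it["vec"]))


def post_filter_search(query, items, predicate, k):
    """Rank all items, keep the top k, then drop those failing the filter."""
    top_k = heapq.nsmallest(k, items, key=lambda it: euclidean(query, it["vec"]))
    return [it for it in top_k if predicate(it["meta"])]


def is_english(meta):
    return meta["lang"] == "en"


items = [
    {"id": 1, "vec": [0.0, 0.0], "meta": {"lang": "en"}},
    {"id": 2, "vec": [0.1, 0.0], "meta": {"lang": "fr"}},
    {"id": 3, "vec": [0.2, 0.0], "meta": {"lang": "fr"}},
    {"id": 4, "vec": [3.0, 0.0], "meta": {"lang": "en"}},
]
query = [0.0, 0.0]

pre = [it["id"] for it in pre_filter_search(query, items, is_english, k=2)]
post = [it["id"] for it in post_filter_search(query, items, is_english, k=2)]
print(pre)   # two English items, even though one is far away
print(post)  # only the English items that made the overall top 2
```

In this toy data, post-filtering returns a single result for k=2 because one of the two nearest items is filtered out afterward. That is the kind of behavior worth checking in a candidate database's documentation.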

One thing often overlooked about vector databases is that they also need to be good databases! Those that do a good job handling content and metadata at the required scale should be at the top of your list. Your assessment needs to include concerns common to all databases, such as access controls, ease of administration, reliability and availability, and operating costs.

Conclusion

Probably the most common use case today for vector databases is complementing Large Language Models (LLMs) as part of an AI-driven workflow. These are powerful tools, and the industry is only scratching the surface of what’s possible with them. Be warned: This amazing technology is likely to inspire you with fresh ideas about new applications and possibilities for your search stack and your business.

Learn how Rockset supports vector search here.