Since its introduction in 2012, Amazon DynamoDB has been one of the most popular NoSQL databases in the cloud. DynamoDB, unlike a traditional RDBMS, scales horizontally, obviating the need for careful capacity planning, resharding, and database maintenance. As a result, DynamoDB is the database of choice for companies building event-driven architectures and user-friendly, performant applications at scale. As such, DynamoDB is central to many modern applications in ad tech, gaming, IoT, and financial services.

However, while DynamoDB is great for real-time transactions, it does not do as well for analytics workloads. Analytics workloads are where Rockset shines. To enable these workloads, Rockset provides a fully managed sync to DynamoDB tables with its built-in connector. The data from DynamoDB is automatically indexed in an inverted index, a column index, and a row index, which can then be queried quickly and efficiently.

As such, the DynamoDB connector is one of our most widely used data connectors. We see users move massive amounts of data (terabytes' worth) using the DynamoDB connector. Given the scale of this use, we soon uncovered shortcomings with our connector.

How the DynamoDB Connector Currently Works with the Scan API

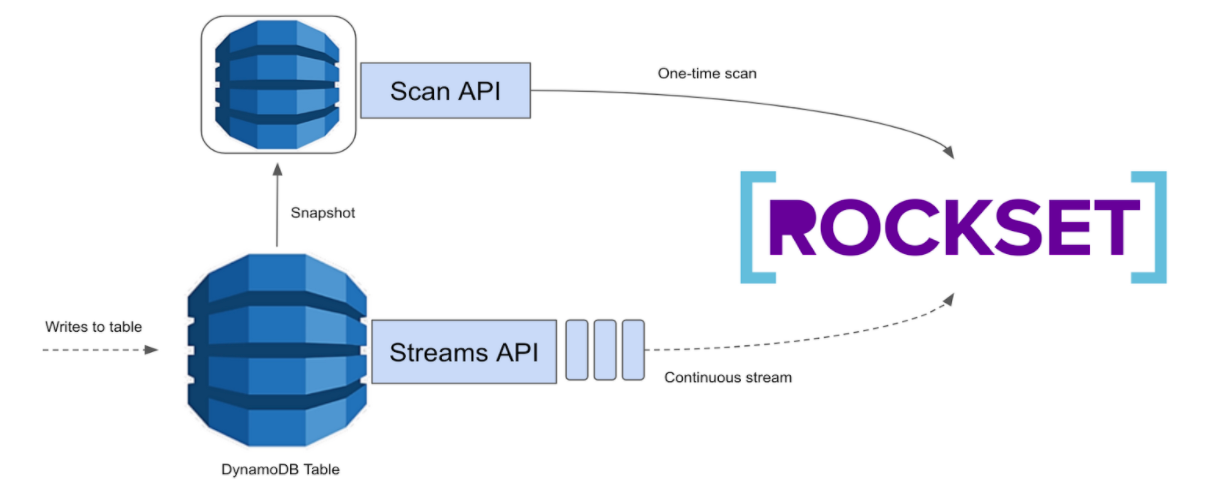

At a high level, we ingest data into Rockset using the current connector in two phases:

- Initial Dump: This phase uses DynamoDB’s Scan API for a one-time scan of the entire table.

- Streaming: This phase uses DynamoDB’s Streams API and consumes continuous updates made to a DynamoDB table in a streaming fashion.

Roughly, the initial dump gives us a snapshot of the data, on top of which the updates from the streaming phase are applied. While the initial dump using the Scan API works well for small tables, it does not always do well for large data dumps.
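To make the initial dump phase concrete, here is a minimal sketch of reading one segment of a parallel Scan. It is an illustration, not our connector's code: `scan_segment` is a hypothetical helper, and `client` is assumed to behave like `boto3.client("dynamodb")`, whose `scan` call accepts `Segment`, `TotalSegments`, and `ExclusiveStartKey` and returns `Items` plus an optional `LastEvaluatedKey`.

```python
from typing import Any, Dict, Iterator


def scan_segment(client: Any, table_name: str, segment: int,
                 total_segments: int) -> Iterator[Dict[str, Any]]:
    """Yield every item in one segment of a parallel Scan.

    `client` is assumed to behave like boto3.client("dynamodb").
    """
    kwargs = {
        "TableName": table_name,
        "Segment": segment,
        "TotalSegments": total_segments,
    }
    while True:
        page = client.scan(**kwargs)
        yield from page.get("Items", [])
        last_key = page.get("LastEvaluatedKey")
        if last_key is None:
            return  # no more pages in this segment
        # Resume the next page where the previous one stopped.
        kwargs["ExclusiveStartKey"] = last_key
```

One worker would typically run this per segment, for example `scan_segment(boto3.client("dynamodb"), "my-table", 0, 8)`, where the table name and segment count are placeholders. DynamoDB decides which items land in which segment, so the caller has no control over how much work each worker receives.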

There are two main issues with the DynamoDB initial dump as it stands today:

- Unconfigurable segment sizes: Dynamo does not always balance segments uniformly, sometimes leading to a straggler segment that is inordinately larger than the others. Because parallelism is at segment granularity, we have seen straggler segments increase the total ingestion time for several users in production.

- Fixed Dynamo stream retention: DynamoDB Streams capture change records in a log for up to 24 hours. This means that if the initial dump takes longer than 24 hours, the shards that were checkpointed at the start of the initial dump will have expired by then, leading to data loss.
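The two issues compound, which a little arithmetic shows. The sketch below assumes one worker per segment and a fixed per-worker throughput; the sizes and the 20 GB/hour rate are hypothetical numbers chosen for illustration, not measurements.

```python
STREAM_RETENTION_HOURS = 24  # DynamoDB Streams retention window


def dump_hours(segment_sizes_gb, gb_per_hour_per_worker):
    """Wall-clock hours for a dump with one worker per segment.

    Workers run in parallel, so the largest segment sets the finish time.
    """
    return max(segment_sizes_gb) / gb_per_hour_per_worker


def outlives_stream_retention(hours):
    """True if the dump runs past the stream retention window."""
    return hours > STREAM_RETENTION_HOURS


# Hypothetical: 1,000 GB over 4 segments, 20 GB/hour per worker.
balanced = dump_hours([250, 250, 250, 250], 20)  # 12.5 hours
skewed = dump_hours([100, 100, 100, 700], 20)    # 35.0 hours

print(balanced, outlives_stream_retention(balanced))
print(skewed, outlives_stream_retention(skewed))
```

With the same total data and the same number of workers, the skewed split pushes the dump past the 24-hour window, at which point the stream position checkpointed at the start of the dump is no longer readable.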

Improving the DynamoDB Connector with Export to S3

When AWS announced the launch of new functionality that allows you to export DynamoDB table data to Amazon S3, we started evaluating this approach to see whether it would help overcome the shortcomings of the older approach.

At a high level, instead of using the Scan API to get a snapshot of the data, we use the new export table to S3 functionality. The export is not a drop-in replacement for the Scan API, so we also tweaked the streaming phase; together, the export to S3 and the adjusted streaming phase form the basis of our new connector.
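For readers who have not used the export feature, the following is a minimal sketch of starting an export and checking its status. It is not our connector's implementation. `start_export` and `export_finished` are hypothetical helpers, and `client` is assumed to behave like `boto3.client("dynamodb")`, which exposes `export_table_to_point_in_time` and `describe_export`. Exports also require point-in-time recovery to be enabled on the table.

```python
from typing import Any, Optional


def start_export(client: Any, table_arn: str, bucket: str,
                 prefix: Optional[str] = None) -> str:
    """Start a DynamoDB export to S3 and return the export ARN.

    `client` is assumed to behave like boto3.client("dynamodb").
    """
    kwargs = {
        "TableArn": table_arn,
        "S3Bucket": bucket,
        "ExportFormat": "DYNAMODB_JSON",
    }
    if prefix:
        kwargs["S3Prefix"] = prefix
    response = client.export_table_to_point_in_time(**kwargs)
    return response["ExportDescription"]["ExportArn"]


def export_finished(client: Any, export_arn: str) -> bool:
    """Return True once the export completes; raise if it failed."""
    description = client.describe_export(ExportArn=export_arn)
    status = description["ExportDescription"]["ExportStatus"]
    if status == "FAILED":
        raise RuntimeError(f"export {export_arn} failed")
    return status == "COMPLETED"
```

A caller would poll `export_finished` until it returns `True` and then read the exported files from the bucket. Because the export reads from the table's point-in-time backup rather than through the Scan API, it does not draw on the table's read capacity.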

While the old connector took almost 20 hours to ingest 1TB end to end with a production workload running on the DynamoDB table, the new connector takes only about 1 hour, end to end. What’s more, ingesting 20TB from DynamoDB takes only 3.5 hours, end to end! All you need to provide is an S3 bucket!

Benefits of the new approach:

- The export does not affect the provisioned read capacity of the DynamoDB table, and thus does not affect any production workload running on it

- The export process is a lot faster than custom table-scan solutions

- S3 tasks can be configured to spread the load evenly, so that we don’t have to deal with a heavily imbalanced segment as we did with DynamoDB

- Checkpointing with S3 comes for free (we just recently built support for this)
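To illustrate why exported files are easier to balance than Scan segments, here is one possible way to spread files across ingest tasks: a greedy, largest-first assignment. This is an assumption made for illustration, not a description of how our S3 tasks are scheduled. `assign_files` is a hypothetical helper, and the file names and sizes are made up.

```python
import heapq


def assign_files(file_sizes, num_tasks):
    """Greedy assignment: largest file first, to the least-loaded task."""
    heap = [(0, task) for task in range(num_tasks)]  # (size assigned, task id)
    heapq.heapify(heap)
    assignment = {task: [] for task in range(num_tasks)}
    by_size = sorted(file_sizes.items(), key=lambda kv: kv[1], reverse=True)
    for name, size in by_size:
        load, task = heapq.heappop(heap)  # task with the least data so far
        assignment[task].append(name)
        heapq.heappush(heap, (load + size, task))
    return assignment


# Hypothetical export files and their sizes in GB.
files = {"a": 5, "b": 4, "c": 3, "d": 3, "e": 1}
print(assign_files(files, 2))
```

Because file sizes are known before ingestion starts, the work can be divided up front, which is not possible with Scan segments whose sizes DynamoDB chooses.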

We’re opening up access for public beta, and can’t wait for you to take this for a spin! Sign up here.

Happy ingesting and happy querying!