Large Language Models (LLMs) will be at the core of many groundbreaking AI solutions for enterprise organizations. Here are just a few examples of the benefits of using LLMs in the enterprise for both internal and external use cases:

Optimize Costs. LLMs deployed as customer-facing chatbots can respond to frequently asked questions and simple queries. This frees customer service representatives to focus their time and attention on higher-value interactions, leading to a more cost-efficient service model.

Save Time. LLMs deployed as internal enterprise-specific agents can help employees find internal documentation, data, and other company information, so organizations can easily extract and summarize important internal content.

Increase Productivity. LLMs deployed as code assistants accelerate developer efficiency within an organization and help ensure that code meets standards and coding best practices.

Several LLMs are publicly available through APIs from OpenAI, Anthropic, AWS, and others, which give developers instant access to industry-leading models that are capable of performing most generalized tasks. However, these LLM endpoints often can’t be used by enterprises for several reasons:

- Private Data Sources: Enterprises often need an LLM that knows where and how to access internal company data, and users often can’t share this data with an open LLM.

- Company-specific Formatting: LLMs are sometimes required to provide a very nuanced formatted response specific to an enterprise’s needs, or to meet an organization’s coding standards.

- Hosting Costs: Even if an organization wants to host one of these large generic models in its own data centers, it is often limited by the compute resources available for hosting these models.

The Need for Fine Tuning

Fine tuning solves these issues. Fine tuning involves another round of training for a specific model to help guide the output of LLMs to meet the specific standards of an organization. Given some example data, LLMs can quickly learn new content that wasn’t available during the initial training of the base model. The benefits of using fine-tuned models in an organization are numerous:

- Meet Coding Formats and Standards: Fine tuning an LLM helps ensure the model generates specific coding formats and standards, or provides specific actions that can be taken from customer input to an agent chatbot.

- Reduce Training Time: AI practitioners can train “adapters” for base models, which only train a specific subset of parameters within the LLM. These adapters can be swapped freely between one another on the same model, so a single model can perform different roles based on the adapters.

- Achieve Cost Benefits: Smaller models that are fine-tuned for a specific task or use case can perform just as well as or better than a “generalized” larger LLM that is an order of magnitude more expensive to operate.
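
To get a feel for how small that “subset of parameters” can be, here is a rough back-of-the-envelope sketch. It assumes a low-rank (LoRA-style) adapter, which is one common adapter design; this article doesn’t say which adapter type Fine Tuning Studio trains, and the layer size and rank below are purely illustrative.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Number of weights in a dense d_out x d_in projection matrix."""
    return d_out * d_in


def low_rank_adapter_params(d_out: int, d_in: int, rank: int) -> int:
    """Weights in a low-rank adapter: two factors of shape
    (d_out x rank) and (rank x d_in) that are trained instead of the full matrix."""
    return rank * (d_out + d_in)


# Hypothetical numbers chosen only for illustration.
hidden_size = 1536
rank = 8

frozen = full_params(hidden_size, hidden_size)
trained = low_rank_adapter_params(hidden_size, hidden_size, rank)
print(f"frozen base weights per layer: {frozen:,}")
print(f"trainable adapter weights:     {trained:,}")
print(f"trainable fraction:            {trained / frozen:.2%}")
```

Because the base weights stay frozen, several adapters of this kind can be stored side by side and attached to the same base model as needed.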

Although the benefits of fine tuning are substantial, the process of preparing, training, evaluating, and deploying fine-tuned LLMs is a lengthy LLMOps workflow that organizations handle differently. This leads to compatibility issues and a lack of consistency in data and model organization.

Introducing Cloudera’s Fine Tuning Studio

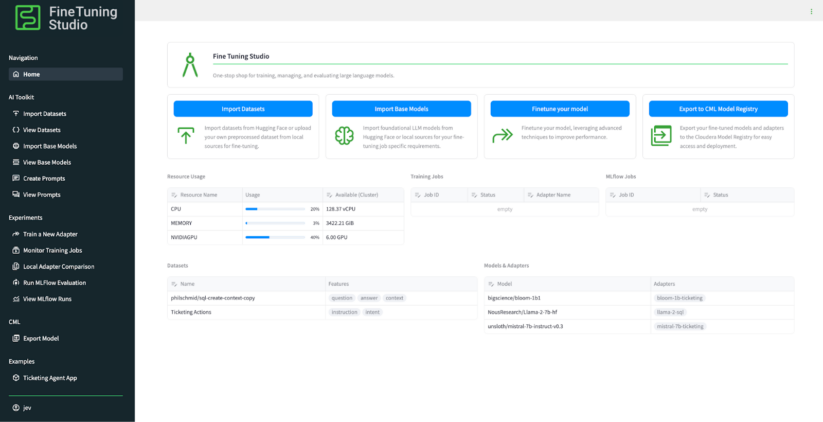

To help remedy these issues, Cloudera introduces Fine Tuning Studio, a one-stop-shop studio application that covers the entire workflow and lifecycle of fine tuning, evaluating, and deploying fine-tuned LLMs in Cloudera’s AI Workbench. Now, developers, data scientists, solution engineers, and all AI practitioners working within Cloudera’s AI ecosystem can easily organize data, models, training jobs, and evaluations related to fine tuning LLMs.

Fine Tuning Studio Key Capabilities

Once Fine Tuning Studio is deployed to an enterprise’s Cloudera AI Workbench, users gain instant access to powerful tools within Fine Tuning Studio to help organize data, test prompts, train adapters for LLMs, and evaluate the performance of these fine-tuning jobs:

- Track all your resources for fine tuning and evaluating LLMs. Fine Tuning Studio enables users to track the location of all datasets, models, and model adapters for training and evaluation. Datasets imported from Hugging Face or directly from a Cloudera AI project (such as a custom CSV), as well as models imported from multiple sources such as Hugging Face and Cloudera’s Model Registry, are all organized together and can be used throughout the tool, regardless of their type or location.

- Build and test training and inference prompts. Fine Tuning Studio ships with powerful prompt templating features, so users can build and test the performance of different prompts to feed into different models and model adapters during training. Users can compare the performance of different prompts on different models.

- Train new adapters for an LLM. Fine Tuning Studio makes training new adapters for an LLM a breeze. Users can configure training jobs right within the UI, either leaving training jobs with their sensible defaults or fully configuring a training job down to custom parameters that can be sent to the training job itself. The training jobs use Cloudera’s Workbench compute resources, and users can track the performance of a training job within the UI. Furthermore, Fine Tuning Studio comes with deep MLFlow experiments integration, so every metric related to a fine tuning job can be viewed in Cloudera AI’s Experiments view.

- Evaluate the performance of trained LLMs. Fine Tuning Studio ships with several ways to test the performance of a trained model and compare the performance of models against one another, all within the UI. Fine Tuning Studio provides ways to quickly test the performance of a trained adapter with simple spot-checking, and also provides full MLFlow-based evaluations comparing the performance of different models to one another using industry-standard metrics. The evaluation tools built into Fine Tuning Studio allow AI professionals to check the safety and performance of a model before it ever reaches production.

- Deploy trained LLMs to production environments. Fine Tuning Studio ships natively with deep integrations with Cloudera’s AI suite of tools to deploy, host, and monitor LLMs. Users can immediately export a fine-tuned model as a Cloudera Machine Learning Model endpoint, which can then be used in production-ready workflows. Users can also export fine-tuned models into Cloudera’s new Model Registry, which can later be used to deploy to Cloudera AI’s new AI Inferencing service running within a Workspace.

- No-code, low-code, and all-code solutions. Fine Tuning Studio ships with a convenient Python client that makes calls to Fine Tuning Studio’s core server. This means that data scientists can build and develop their own training scripts while still using Fine Tuning Studio’s compute and organizational capabilities. Anyone with any skill level can leverage the power of Fine Tuning Studio with or without code.

An End-to-End Example: Ticketing Support Agent

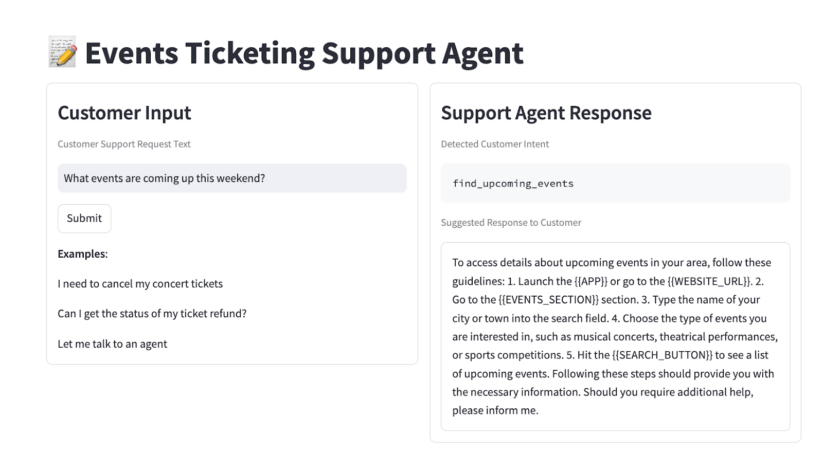

To show how easy it is for GenAI builders to build and deploy a production-ready application, let’s take a look at an end-to-end example: fine tuning an event ticketing customer support agent. The goal is to fine tune a small, cost-effective model that, based on customer input, can extract an appropriate “action” (think API call) that the downstream system should take for the customer. Given the cost constraints of hosting and infrastructure, the goal is to fine tune a model that is small enough to host on a consumer GPU and can provide the same accuracy as a larger model.

Data Preparation. For this example, we will use the bitext/Bitext-events-ticketing-llm-chatbot-training-dataset dataset available on HuggingFace, which contains pairs of customer input and desired intent/action output for a variety of customer inputs. We can import this dataset on the Import Datasets page.

Model Selection. To keep our inference footprint small, we will use the bigscience/bloom-1b1 model as our base model, which is also available on HuggingFace. We can import this model directly from the Import Base Models page. The goal is to train an adapter for this base model that gives it better predictive capabilities for our specific dataset.

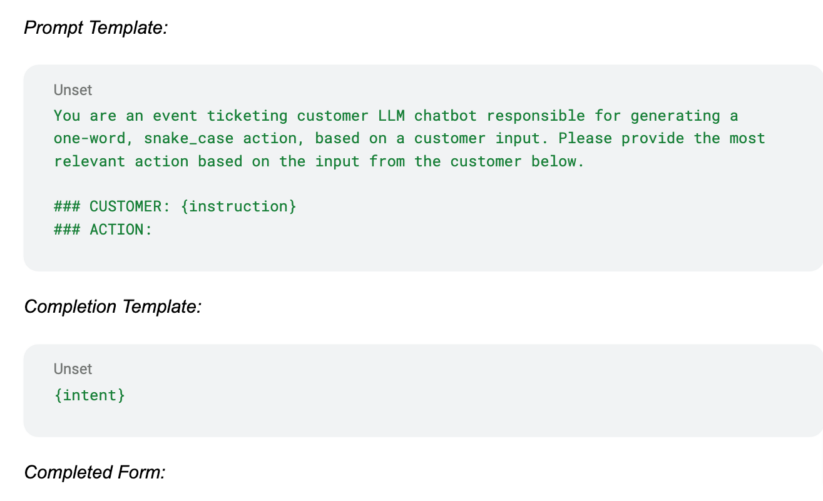

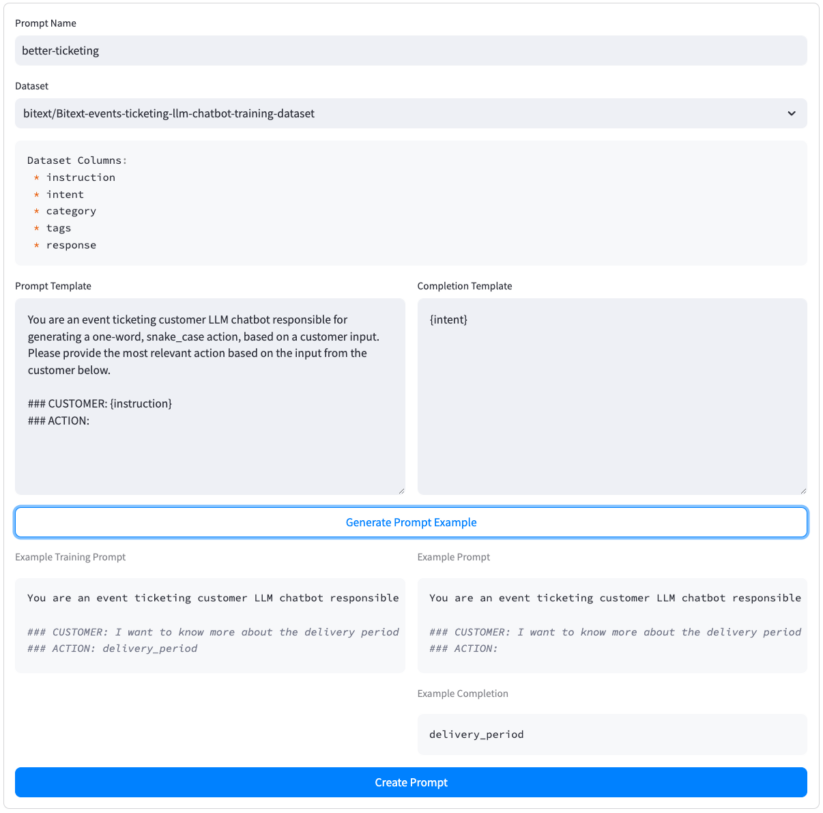

Creating a Training Prompt. Next, we’ll create a prompt for both training and inference. We can use this prompt to give the model more context on possible selections. Let’s name our prompt better-ticketing and use our bitext dataset as the base dataset for the prompt. The Create Prompts page enables us to create a prompt “template” based on the features available in the dataset. We can then test the prompt against the dataset to make sure everything is working properly. Once everything looks good, we hit Create Prompt, which activates our prompt for use throughout the tool. Our prompt template uses the instruction and intent fields from our dataset.
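
The exact template text isn’t reproduced here, so as a rough illustration only, here is how a template over the instruction and intent fields might be rendered for a single dataset row. The template wording and the `render_prompt` helper are hypothetical and are not taken from Fine Tuning Studio.

```python
# Hypothetical template: the wording is an assumption, only the
# {instruction} and {intent} field names come from the dataset.
TRAINING_TEMPLATE = (
    "You are an event ticketing customer support agent. "
    "Return the action to take for the customer request.\n"
    "### CUSTOMER: {instruction}\n"
    "### ACTION: {intent}"
)


def render_prompt(row: dict, template: str = TRAINING_TEMPLATE) -> str:
    """Fill the template from one dataset row.

    Extra columns in the row are ignored; a missing column raises KeyError.
    """
    return template.format(**row)


row = {
    "instruction": "i have to get a refund i need assistance",
    "intent": "get_refund",
}
print(render_prompt(row))
```

At inference time the same template would typically be rendered with an empty `intent`, leaving the model to complete the action.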

Train a New Adapter. With a dataset, model, and prompt selected, let’s train a new adapter for our bloom-1b1 model, which can more accurately handle customer requests. On the Train a New Adapter page, we can fill out all relevant fields, including the name of our new adapter, the dataset to train on, and the training prompt to use. For this example, we had two L40S GPUs available for training, so we chose the Multi Node training type. We trained for 2 epochs on 90% of the dataset, leaving 10% available for evaluation and testing.
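
For readers scripting their own jobs, a 90/10 split like the one above can be sketched in a few lines. This is a minimal illustration on a placeholder dataset; how Fine Tuning Studio performs the split internally isn’t described here, and the `train_test_split` helper and seed are assumptions.

```python
import random


def train_test_split(rows, train_fraction=0.9, seed=42):
    """Shuffle a copy of the rows with a fixed seed, then cut at train_fraction."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


# Placeholder rows standing in for the ticketing dataset.
rows = [{"id": i} for i in range(1000)]
train_rows, test_rows = train_test_split(rows)
print(len(train_rows), len(test_rows))
```

Fixing the seed keeps the held-out 10% stable across runs, so later evaluations are compared on the same examples.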

Monitor the Training Job. On the Monitor Training Jobs page we can track the status of our training job, and also follow the deep link to the Cloudera Machine Learning Job to view log outputs directly. With two L40S GPUs, training for 2 epochs on our bitext dataset completed in only 10 minutes.

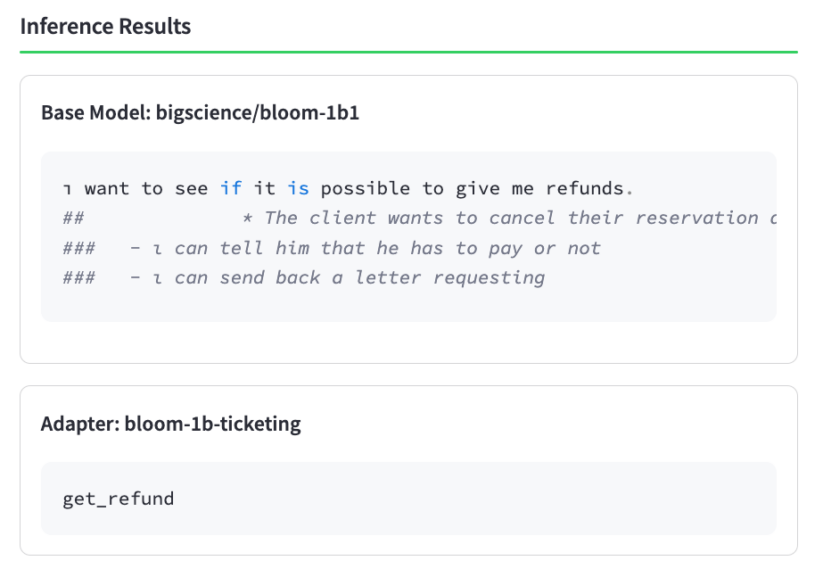

Check Adapter Performance. Once the training job completes, it’s helpful to “spot check” the performance of the adapter to make sure that it was trained successfully. Fine Tuning Studio offers a Local Adapter Comparison page to quickly compare the performance of a prompt between a base model and a trained adapter. Let’s try a simple customer input, pulled directly from the bitext dataset: “i have to get a refund i need assistance”, where the corresponding desired output action is get_refund. Looking at the output of the base model compared to the trained adapter, it’s clear that training had a positive impact on our adapter!

Evaluate the Adapter. Now that we’ve performed a spot check to make sure training completed successfully, let’s take a deeper look into the performance of the adapter. We can evaluate the performance against the “test” portion of the dataset from the Run MLFlow Evaluation page. This provides a more in-depth evaluation of any selected models and adapters. For this example, we will compare the performance of 1) just the bigscience/bloom-1b1 base model, 2) the same base model with our newly trained better-ticketing adapter activated, and finally 3) a larger mistral-7b-instruct model.

As we can see, the rougeL metric (similar to an exact match, but more complex) of the 1B model adapter is significantly higher than the same metric for an untrained 7B model. As simple as that, we trained an adapter for a small, cost-effective model that outperforms a significantly larger model. Even though the larger 7B model may perform better on generalized tasks, the non-fine-tuned 7B model has not been trained on the available “actions” that the model can take given a specific customer input, and therefore would not perform as well as our fine-tuned 1B model in a production environment.
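
For readers unfamiliar with the metric, ROUGE-L scores a prediction by the longest common subsequence (LCS) of tokens it shares with the reference. The sketch below is a simplified, whitespace-tokenized version for illustration only; it is not the implementation behind the evaluation page, and library implementations tokenize and normalize text differently, so their scores may not match.

```python
def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            if x == y:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(reference: str, prediction: str) -> float:
    """ROUGE-L F1 over whitespace tokens (a simplifying assumption)."""
    ref, pred = reference.split(), prediction.split()
    lcs = lcs_length(ref, pred)
    if lcs == 0:
        return 0.0
    precision = lcs / len(pred)
    recall = lcs / len(ref)
    return 2 * precision * recall / (precision + recall)


print(rouge_l_f1("get_refund", "get_refund"))             # exact match
print(rouge_l_f1("get_refund", "please get_refund now"))  # right action, extra words
print(rouge_l_f1("get_refund", "cancel_order"))           # wrong action
```

An exact match scores highest, extra words around the right action lower the precision term, and a wrong action scores zero, which is why a model that hasn’t seen the available actions tends to score poorly on this task.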

Accelerating Fine Tuned LLMs to Production

As we saw, Fine Tuning Studio enables anyone of any skill level to train a model for any enterprise-specific use case. Now, customers can incorporate cost-effective, high-performance, fine-tuned LLMs into their production-ready AI workflows more easily than ever, and expose models to customers while ensuring safety and compliance. After training a model, users can use the Export Model feature to export trained adapters as a Cloudera Machine Learning model endpoint, which is a production-ready model hosting service available to Cloudera AI (formerly known as Cloudera Machine Learning) customers. Fine Tuning Studio ships with a powerful example application showing how easy it is to incorporate a model that was trained within Fine Tuning Studio into a full-fledged production AI application.

How can I Get Started with Fine Tuning Studio?

Cloudera’s Fine Tuning Studio is available to Cloudera AI customers as an Accelerator for Machine Learning Projects (AMP), right from Cloudera’s AMP catalog. Install and try Fine Tuning Studio by following the instructions for deploying this AMP right from the workspace.

Want to see what’s under the hood? For advanced users, contributors, or other users who want to view or modify Fine Tuning Studio, the project is hosted on Cloudera’s GitHub here: https://github.com/cloudera/CML_AMP_LLM_Fine_Tuning_Studio.

Get Started Today!

Cloudera is excited to be working at the forefront of training, evaluating, and deploying LLMs to customers in production-ready environments. Fine Tuning Studio is under continuous development, and the team is eager to continue providing customers with a streamlined approach to fine tune any model, on any data, for any enterprise application. Get started today on your fine tuning needs; Cloudera AI’s team is ready to help make your enterprise’s vision for AI-ready applications a reality.